Analysis of Sculpting the Air (2010-2015) by Jesper Nordin

Introduction: context, concept and methodology

About the composer and his project

Jesper Nordin is a unique figure in the contemporary music landscape. With a background in rock and electronic music, he composed his first works by ear, using sequencers, drum machines and electronic keyboards. It was only at age 20 that he undertook formal study of music theory. Nonetheless, the need to remain active as an experimental musician led him to regularly improvise alongside musicians practicing a diverse range of styles (to this day, this is a key element of his creative process). His work as an improviser included the creation, starting in 2007, of his own set of compositional tools. Nordin describes his background, in which classical music theory and the use of real-time electronics co-existed, as follows:

Even though I regularly compose works for orchestra or ensemble, I always make recordings with musicians and then use them to construct maquettes with a digital sequencer, which I then transcribe. Over time, I came to focus more and more on these maquettes, and less on the score itself […] I like to be able to intuitively control the sketches for my scores, as I would in an improvisatory setting, without the end result necessarily being a comprehensive score. I have seen many people working with tactile surfaces and tablets in order to produce sounds in different ways. It struck me that I could use MIDI messages to generate music by creating a new “instrument” over which I would have complete control. Therefore, I created a patch in Max/MSP in 2007 in which I could organize pitches and durations into a grid; however, as I did not want to be limited to a single instrument, the stylus had to allow me to control an entire orchestra. [Jesper Nordin, 10 November, 2014, Arch. Ircam – Bacot-Féron, our translation]

Co-commissioned by IRCAM/Centre Pompidou and GRAME as part of the INEDIT Project, and funded by the French National Research Agency (Agence Nationale de la Recherche) with the support of Statens Musikverk—Music Development and Heritage, Sweden, Sculpting the Air is as much an artistic project as it is the culmination of scientific research. The originality of this work—premiered by Ensemble TM+, conducted by Marc Desmons, at Maison de la Musique, Nanterre on 13 June, 2015—lies in the fact that the physical gestures of the conductor serve to control the electronics through the use of a complex technological setup conceived by the composer and created with the assistance of computer music designer, Manuel Poletti. Based on the idea of creating a concerto for conductor, Sculpting the Air is the first part of a trilogy for which interactive tools were developed to explore, both musically and visually, the concept of “exformation.”

Exformation

“Exformation” is a notion introduced by Danish science-fiction author and “popular science” writer/lecturer, Tor Nørretranders in his 1992 book, Mærk verden (translated into English as The User Illusion). In this book, the author proposes that messages communicate not only information, but also “exformation,” i.e., information that is not intentionally and explicitly transmitted. Thus, exformation lies in the context in which information is transmitted; it is omnipresent and always contains meaning. To illustrate this idea, Nørretranders gives the example of Victor Hugo, who, while away on holiday following the publication of Les Misérables, sent a letter to his publisher to inquire about the reception of his book. The letter contained only the punctuation mark “?”. The response from his publisher was simply “!”.

Hugo’s use of the question mark was the result of a voluntary rejection of information. It was not a simple omission, as if he had forgotten relevant information; on the contrary, it deliberately referred to information that was not included, even if, from an epistolary point of view, that information had been omitted. In The User Illusion, the term “exformation” is used to describe information which is explicitly excluded. A message has depth if it contains an abundance of information. If a person, while formulating a message, deliberately excludes information such that it is not explicitly transmitted, then he/she generates “exformation.” It is not possible to quantify the exformation in a message based on its contents alone; only the context in which the information is transmitted can be used to make such an assessment. The author of the message formulates it such that it reflects the information that he/she has in mind [Nørretranders, 1998, p. 92].

Nordin cited the example of Victor Hugo’s correspondence with his publisher on numerous occasions to explain the genesis of the concept which inspired his project. In Sculpting the Air (subtitled Gestural exformation), the composer sought to highlight both the information and the exformation expressed through the movements of the conductor:

Exformation […] encapsulates everything that is not explicitly stated but which is present in our minds in verbal interactions or before formulating communication, whereby the information comprises a quantifiable, demonstrable discourse that we will articulate. The movements of the conductor include numerous exformations which obviously differ in nature according to the role of the recipient (musician, listener, etc.). The modest amount of information which may be measured in the conductor’s physical gestures perfectly demonstrates the transmission of an abundance of musical exformation. In this work, we took the commonplace gestures of the conductor and recontextualised/expanded them, causing them to have novel effects [Nordin, 2015].

In this trilogy based on the concept of exformation, Nordin wished to “decentralise the electronics by decoupling them into several modules, instead of just one” [Arch. Ircam—Composer’s Note of Intention, 2014, Projet Nordin STA TM + presentation, shared by C. Béros]. As such, the musicians would have a visual representation of the process of controlling the electronics, and notably, the real-time processes contained therein. In Sculpting the Air, the first part of the trilogy, the conductor’s movements contain information in the traditional sense, i.e., showing the tempo and pulse to the performers; however, they also control the electronics and activate audible sounds, thereby making their “exformational” dimension explicit. While maintaining “standard” conducting techniques, Nordin causes the conductor’s movements to transcend their traditional role, endowing them with new meanings which are no longer destined to communicate only to the instrumentalists. In Visual Exformation, the second part of the trilogy (premiered on 4 October, 2016 by the Diotima Quartet, to whom it was dedicated), the four musicians control the electronics, including a lighting array that surrounds them, with their movements. Part III of the trilogy has not yet been completed, but the work, to be premiered by Ensemble Recherche, will be titled Interactive Exformation.

Analysis of the Creative Process

The methodology applied in this analysis to outline the creative process of Sculpting the Air is based on documents and data collected during work sessions which took place in blocks between August 2014 and June 2015 [Bacot and Féron, 2016]. This corpus, created in situ, sheds light on the creative impulses which occurred in a technological setting, making it possible to dissect the decisions that informed the development of the movement-tracking technologies and retrace the steps that made the work’s existence a reality, from the first written proposal to the premiere. Apart from the first session with conductor Nicolas Agullo, who was unable to continue work on this project due to scheduling incompatibilities, all work sessions were video recorded, allowing us to create a thorough archive of the work’s creation a posteriori. Meetings between the composer and the conductor to discuss recent progress also regularly took place.

Beyond analysing the work itself (in the traditional sense), the present text intends to shed light on the collective creative process, in which the performance parameters were continually adjusted based on tests and discussions among the relevant parties. In other words, this analysis reveals how Nordin developed the technologies and musical materials through collaborations with Poletti, Desmons and the musicians of Ensemble TM+. The computer music designer (CMD) is responsible for the technical realisation of a work, as well as the sustainability of its performance, from the development of software for analyses and real-time treatments to the adaptation of the performance tools for possible future performances [Zattra and Donin, 2016]. The conductor, as the main “user” of the motion-tracking technology, was naturally obliged to collaborate intimately with the composer and the CMD in order to make proposals and clarify his role in performance. The same may be said, albeit to a slightly lesser degree, of the musicians in the ensemble. Focusing on the genesis of Sculpting the Air, and discussing the various technological, gestural and notational elements which were born through its creation, this analysis hopes to encapsulate the dynamic, collaborative nature of the creative process behind this work.

The Gestrument and ScaleGen Software

Background

One of the singular technical aspects of this work lies in the fact that it is based on technologies developed by Nordin himself: the Gestrument and ScaleGen software. These programmes are the result of a process of adaptation, development and creativity, lasting several years and forming the technological basis of the Exformation trilogy. Before detailing the functionalities of these programmes, it is important to briefly discuss their genesis in order to better understand the roles that they played, both in the compositional process and in the conception of new forms of interactive technology.

Gestrument was initially conceived by Nordin as an aid to composing, i.e., by generating reservoirs of material, as opposed to performing tasks related to the use of electronics. In 2007, Nordin composed Undercurrents, commissioned by GRAME. The piece used a tablet device with a stylus to control a MaxMSP patch, allowing the user to improvise on various musical scales. A demonstration of this is available, recorded during Nordin’s residency at GRAME in 2011 while he was working on Frames in Transit [Media 1]. The interface used in the demonstration formed the basis of the gestural mode of interaction which the composer pursued through the development of Gestrument; that software was commercially released in 2012.

Media 1. Improvisation on a microtonal scale with a Wacom tablet. This approach was initially developed by Nordin in 2007 at GRAME (Lyon) with the support of Christophe Lebreton [© EV Grame, 2011].

Gestrument

In 2012, based on the code in Max for his computer-assisted composition programme, the composer, with the assistance of developer Jonathan Liljedahl, finalised a version of Gestrument for use on mobile/touch-screen devices running iOS. The idea of this application was to explore the “musical DNA” of songs, artists or genres such as metal, techno, Indian classical, jazz or trance. It allowed users to connect devices via a MIDI link, making it possible to, for example, associate certain timbres with the data generated by the system. In 2018, Gestrument became “legacy” software—meaning that it was no longer available for download or updated/supported; it was superseded by Gestrument Pro. As the premiere of Sculpting the Air took place in June 2015, Gestrument, and not Gestrument Pro, was used in the work’s creation.

The application is controlled via a tactile surface on which durations are mapped on the x-axis and pitches on the y-axis. The user generates material by swiping his/her finger across the screen; the resulting pitches and durations correspond to those that were “drawn” on the screen. The position of the finger on the screen triggers the playback of acoustic (sampled) or synthetic sounds; it is possible to adjust the number of voices from one to eight. The instruments have different pitch, duration and volume characteristics, which can be varied according to the position of the finger on the screen (the “multitouch” feature had not yet been incorporated). If the finger remains immobile, the note or chord is placed in a loop according to a pre-determined duration and tempo. Transients are not re-triggered if the “pulsation density” mode is deactivated, making it possible to perform legato passages. In this way, the user is able to improvise with pre-defined combinations of scales, timbres, durations and dynamics, and to save the result or recall a given set of parameters. As such, musical material can be generated based on the point of contact of the finger on the screen [Media 2].

Media 2. Official trailer of Gestrument showing the basic functionalities and modes of use [© EV Gestrument, 2014].
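
The grid principle described above (durations along the x-axis, pitches along the y-axis) can be illustrated with a minimal sketch. It is not Gestrument's actual code: the function name `touch_to_note`, the normalised coordinates, the example scale and the left-to-right ordering of the durations are all assumptions made for illustration.

```python
def touch_to_note(x, y, scale, durations):
    """Map a normalised touch position to a (duration, pitch) pair.

    x and y are assumed to lie in [0, 1]; x selects a column of the
    duration grid and y selects a row of the pitch grid.
    """
    if not (0.0 <= x <= 1.0 and 0.0 <= y <= 1.0):
        raise ValueError("touch coordinates must be normalised to [0, 1]")
    # Clamp the index so that x == 1.0 or y == 1.0 falls in the last cell.
    column = min(int(x * len(durations)), len(durations) - 1)
    row = min(int(y * len(scale)), len(scale) - 1)
    return durations[column], scale[row]


# Hypothetical grid: MIDI note numbers on the y-axis, beat lengths on the x-axis.
SCALE = [60, 62, 63, 65, 67, 68, 70, 72]
DURATIONS = [1.0, 0.5, 0.25, 0.125]

print(touch_to_note(0.5, 0.5, SCALE, DURATIONS))
```

Holding the finger still would simply return the same pair on every poll, which is consistent with the looping behaviour described above.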

In Frames in Transit (2012) for orchestra and the Trespassing Trio (Daniel Frankel, violin; Niklas Brommare, percussion; and Nordin himself on electronics), the composer used, for the first time, not a tactile screen but a Kinect motion sensing device to track his movements and control the input to Gestrument. The composer again made use of this type of setup in Sculpting the Air. Two Kinects were used to create motion-tracking spaces in which the “exformations” of the conductor were performed. This key idea was therefore the fruit of a long period of reflection both on the musical dimensions of the work itself and on the optimisation of the technology used to interact with the electronics. At the beginning of his work on the Exformation Trilogy, Gestrument was a mainstay of Nordin’s creative process.

ScaleGen

A separate part of the MaxMSP code created by Nordin gave rise to the creation of ScaleGen, a second application dedicated exclusively to the creation/editing of musical scales. This software allows the user to precisely define pitches (in cents or frequencies) and construct non-octavian scales [Media 3]. Scales produced in this way can be played back directly in the application, exported in various formats, or played on virtual instruments controlled by the MIDI or OSC protocols. It is simple to import such a scale into Gestrument and use it as the basis for an improvisation.

Media 3. Demonstration video of ScaleGen, revealing the easy-to-use nature of the software as well as its complementarity with Gestrument [© EV ScaleGen, 2014].
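
To make the idea of defining pitches in cents or frequencies, and of building non-octavian scales, more concrete, the following sketch uses the standard relation f = f0 · 2^(c/1200) between a frequency and an interval in cents. It does not reproduce ScaleGen's interface; the function names, the 440 Hz reference and the example of thirteen equal steps within a 3:1 interval are assumptions chosen for illustration.

```python
import math


def cents_to_hz(cents, base_hz=440.0):
    """Frequency lying `cents` above the reference frequency."""
    return base_hz * 2.0 ** (cents / 1200.0)


def hz_to_cents(freq_hz, base_hz=440.0):
    """Interval in cents between a frequency and the reference."""
    return 1200.0 * math.log2(freq_hz / base_hz)


def equal_division_scale(interval_ratio, steps):
    """Cent offsets dividing an arbitrary interval into equal steps.

    With interval_ratio=2.0 the scale repeats at the octave; any other
    ratio gives a non-octavian scale.
    """
    span = 1200.0 * math.log2(interval_ratio)
    return [span * i / steps for i in range(steps + 1)]


# Hypothetical example: thirteen equal steps spanning a 3:1 frequency ratio.
scale_cents = equal_division_scale(3.0, 13)
scale_hz = [cents_to_hz(c) for c in scale_cents]
```

A list of cent offsets such as `scale_cents` is the kind of data that could then be handed to a grid-based instrument as its pitch axis.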

The collaborative creative process

Production schedule

The creation of Sculpting the Air took almost an entire year, from August 2014 to June 2015. In this time, Nordin undertook four periods of research at IRCAM (each lasting two weeks), during which he collaborated closely with the CMD and conductor on the conception and creation of the ad hoc technology used for motion tracking. This technology had to operate in a contactless manner, and be as ergonomic and stable as possible [Bacot and Féron, 2016].

First session (August 2014)—Improvising to generate material and define the requirements of the technology.

Nordin arrived at IRCAM in August 2014 with the material he had used in Frames in Transit two years earlier, i.e., an iPad and a Kinect device. He also brought some small porcelain Chinese bells. Discussions with the CMD were essentially focused on the possibilities offered by various motion-tracking technologies and on the feasibility of creating a “home-made” system. As the technological/instrumental configurations used in Frames in Transit had proved to be reliable, it was decided that the same technologies should be adapted for use in Sculpting the Air. In order to crystallise the idea of a concerto for conductor, and to identify the possibilities (and impossibilities) inherent to the use of this technology, tests were carried out with conductor Nicolas Agullo. These included triggering sounds via the aforementioned miniature bells, which were suspended in the Kinect motion-tracking space, looping instrumental motifs (in the form of MIDI files), and improvising on a microtonal scale (inspired by Swedish folk music) using Gestrument.

It was also at this time that Nordin generated material, in the form of MIDI files, by improvising with different physical gestures or using the miniature bells as pendula which periodically entered the Kinect motion-tracking space. Gestrument and ScaleGen are not only performance tools; they can also be used to generate sounds. In this regard, improvisation is, again, a key part of the process, as it allows the user to explore an auditory space intuitively and gesturally while also avoiding the pitfalls of imitating other composers or repeating oneself [Arch. Ircam – Bacot-Féron, 13 November, 2014]. Using ScaleGen, the composer first created a set of unique microtonal scales, some of which were, according to Nordin, derived from Swedish folk music. These scales were then imported into Gestrument and served as the bases of improvisations which, in turn, gave rise to the creation of a reservoir of motifs and harmonies. The audio and MIDI output from these improvisatory sessions were conserved to facilitate the subsequent extraction or alteration of material produced in this manner.

Following this preparatory session, the composer wrote a short text in which he outlined the conceptual basis of his trilogy and identified the key movements that the conductor would have to perform in Sculpting the Air: “1) Conduct in a traditional manner; 2) Control the electronics (using a Kinect or Leap Motion device, and/or directly on the iPad screen); and 3) Manipulate physical objects (i.e., several small bells suspended on an ad hoc stand) with the hands.” [Arch. Ircam—Production, 2014]. With the technological basis for the real-time electronic treatments thus having been defined, Nordin could set to work on composing the score.

Second session (November 2014)—Testing real-time effects by simulating the movements of the conductor.

This session saw the first meeting between conductor Marc Desmons and Nordin and Poletti, who explained to him the nature of the technology and the role of the bells in this piece. The latter were to be oriented either towards the Kinect devices and used as pendula [Figure 1a] or towards the conductor himself, whereby their motion could dictate tempi [Figure 1b]. At this time, the motion-tracking materials were relatively rudimentary: the stands on which the miniature bells were to be suspended were incomplete, and the conductor observed that he would require a visual monitor to position himself correctly in the motion-tracking space. Although the instrumentation, the form and the sound materials had already been clearly defined by the composer, the first draft of the score included little more than the temporal organisation (number of bars, time signatures, tempi), with only a handful of partially composed musical fragments. In contrast, numerous MIDI extracts of what the musicians would perform had been exported, allowing the conductor to experiment, by “miming” the beat, with controlling the real-time electronics. This process, which might be characterised as “karaoke for conductors,” made it possible to:

- adjust the positions of different parts of the setup (music stand, two stands holding the miniature bells, two Kinect devices);

- evaluate the feasibility and degree of precision of the conductor’s gestures as a means of controlling the electronics without endangering clarity for the performers; and

- begin to examine the question of how to notate the conductor’s gestures in the score.

Figure 1. Marc Desmons (left), Jesper Nordin (middle) and Manuel Poletti (right), 12 November, 2014 in Studio 5 at IRCAM [© Arch. Ircam – Bacot-Féron].

Third session (February–March 2015)—first tests with the musicians of Ensemble TM+.

In the previous session, the conductor found that it was impossible to rely on his ear alone to determine whether he was interacting with the electronics as intended, i.e., if he was moving correctly and within the motion-detection space. As such, a computer with two screens was set up to allow him to see whether his hands and the small bells were within the prescribed space [Figures 2a and 2b]. Rather than developing a MaxMSP patch to undertake all of the electronic treatments, it was decided that Ableton Live would be used, in the interest of flexibility. By the third session, work on the score, although still incomplete, had progressed such that the conductor could practice controlling the electronics while “miming” the beat along to excerpts from the score, played back in MIDI. First rehearsals of parts of the score with some of the members of Ensemble TM+ were also organised, which was a major step towards achieving the conditions that would be in place when the work was complete [Figure 2c]. During this session, Nordin also worked in the studio with Florent Jodelet, the percussionist from TM+, recording samples (grand piano, tam tam and bass drum, as well as various other objects including springs, chains and a metal beam) which would later be used in the electronics [Figure 2d].

Figure 2. a) and b) Marc Desmons “miming” the beat to control the electronics via motion tracking with Kinect devices (IRCAM—Studio 5, 24 February, 2015). c) Experimentation with the setup involving some of the members of Ensemble TM+ (IRCAM—Studio 5, 26 February, 2015). d) Recording session with percussionist Florent Jodelet (left), Jesper Nordin and sound engineer Martin Antiphon (with backs turned towards the camera), 5 March, 2015 in Studio 2 at IRCAM [© Arch. Ircam – Bacot-Féron].

Fourth session (June 2015)—Rehearsals with the ensemble and premiere

Nordin completed his score in April and sent it to the conductor. During the final work session, the piece was first rehearsed in its entirety at IRCAM by Desmons using MIDI instrumental playback. The first rehearsals with the musicians from Ensemble TM+ took place at Maison de la Musique, Nanterre, where the work was premiered on 13 June, 2015 [Figure 3]. During this session, discussions were no longer centred on technical/technological aspects, which were largely finalised, but rather, on the interpretation of the work and the optimal assimilation of the real-time electronics.

Figure 3. a) Rehearsal with Ensemble TM+, 10 June, 2015 at Maison de la Musique, Nanterre. b) Mixing desk, etc., located at the back of the hall [© Arch. Ircam – Bacot-Féron].

Research of Movement

The software development kit of the first model of the Kinect (1414) allowed developers to use the visual and motion data sent by the device. In Sculpting the Air, the movements performed by the conductor in the motion-tracking space were detected by the Kinects, and the corresponding digital data (pixels and their coordinates) were sent to Gestrument. Using GestrumentKinectConverter (a piece of ad hoc software developed for this project), data from the Kinects were transformed into MIDI values, which Gestrument could then interpret. Coupled to this programme, Gestrument was able to control not only virtual instruments but also the real-time electronics.
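
The conversion step described above, from tracked positions to MIDI values, can be sketched as a simple rescaling. The internals of GestrumentKinectConverter are not documented here, so everything below is an assumption: the function names, the rectangular tracking box and its bounds are hypothetical, and only the 0 to 127 range of a MIDI data value is taken as given.

```python
def to_midi_value(position, low, high):
    """Rescale a coordinate within [low, high] to an integer from 0 to 127.

    Positions outside the range are clamped to the nearest bound.
    """
    if high <= low:
        raise ValueError("high must be greater than low")
    normalised = (position - low) / (high - low)
    normalised = max(0.0, min(1.0, normalised))
    return round(normalised * 127)


def hand_to_controllers(x, y, box):
    """Convert a hand position to a pair of MIDI values (x value, y value).

    `box` is a hypothetical description of one tracking space, with the
    keys x_min, x_max, y_min and y_max given in the sensor's own units.
    """
    return (
        to_midi_value(x, box["x_min"], box["x_max"]),
        to_midi_value(y, box["y_min"], box["y_max"]),
    )


# Hypothetical tracking box, in metres relative to the sensor.
LEFT_BOX = {"x_min": -0.5, "x_max": 0.0, "y_min": 0.8, "y_max": 1.6}
print(hand_to_controllers(-0.25, 1.6, LEFT_BOX))
```

Clamping rather than rejecting out-of-range positions is one possible design choice; a real system might instead treat a hand outside the box as "no input".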

Rather than using two touchscreens, the piece used two three-dimensional, cubic spaces in which physical gestures could be performed to interact with the electronics. The Kinect devices relayed the movements of the conductor to GestrumentKinectConverter, which assigned them to points on the screen of the Gestrument user interface. In this way, the conductor could both lead the ensemble and enter/exit the motion-tracking space while continuing to conduct, revealing to the audience the reciprocity between his performative gestures and the playback of sounds or control of the parameters of various electronic effects. The role of the conductor in this piece is, to some extent, “contaminated” by this ability to interact with electronics, and vice versa. The two tablet devices, each running a separate Gestrument session, were positioned at the centre of the control station at the back of the hall. An on-stage computer screen allowed the conductor to monitor his movements inside the motion-tracking spaces [Figures 2a and 2b].

Two factors led to rehearsals being undertaken in the absence of the instrumentalists in the second work session. First, this allowed Desmons to practice the various movements (sometimes more than one at a time) that he was required to perform; on top of frequent changes of time signature and the movements which had to be performed precisely within the motion-tracking spaces, Desmons was also required to manipulate the miniature bells [Media 5]. Second, given that such a form of interacting with electronics was new for the conductor, rehearsing in this way allowed him to familiarise himself with the behaviour of the electronics relative to his input. In the same way, the CMD was able to adapt the setup according to the circumstances in order to make conducting the work as simple as possible. In order to rehearse in the absence of musicians, the digital score was played back with virtual instruments. Additionally, a metronome track was added to clarify time and tempo changes.

Media 4. Marc Desmons rehearses while also triggering real-time effects (IRCAM—Studio 5, 12 November, 2014). A MIDI version of the score was played back with an added metronome track. At the end of this passage, the composer and the conductor discuss the problem of how to handle page turns without interfering with the motion tracking [© Arch. Ircam – Bacot-Féron].

Media 5. By entering the motion-tracking spaces, the miniature bells trigger samples of the resonances of prepared notes on a piano (IRCAM—Studio 5, 12 November, 2014) [© Arch. Ircam – Bacot-Féron].

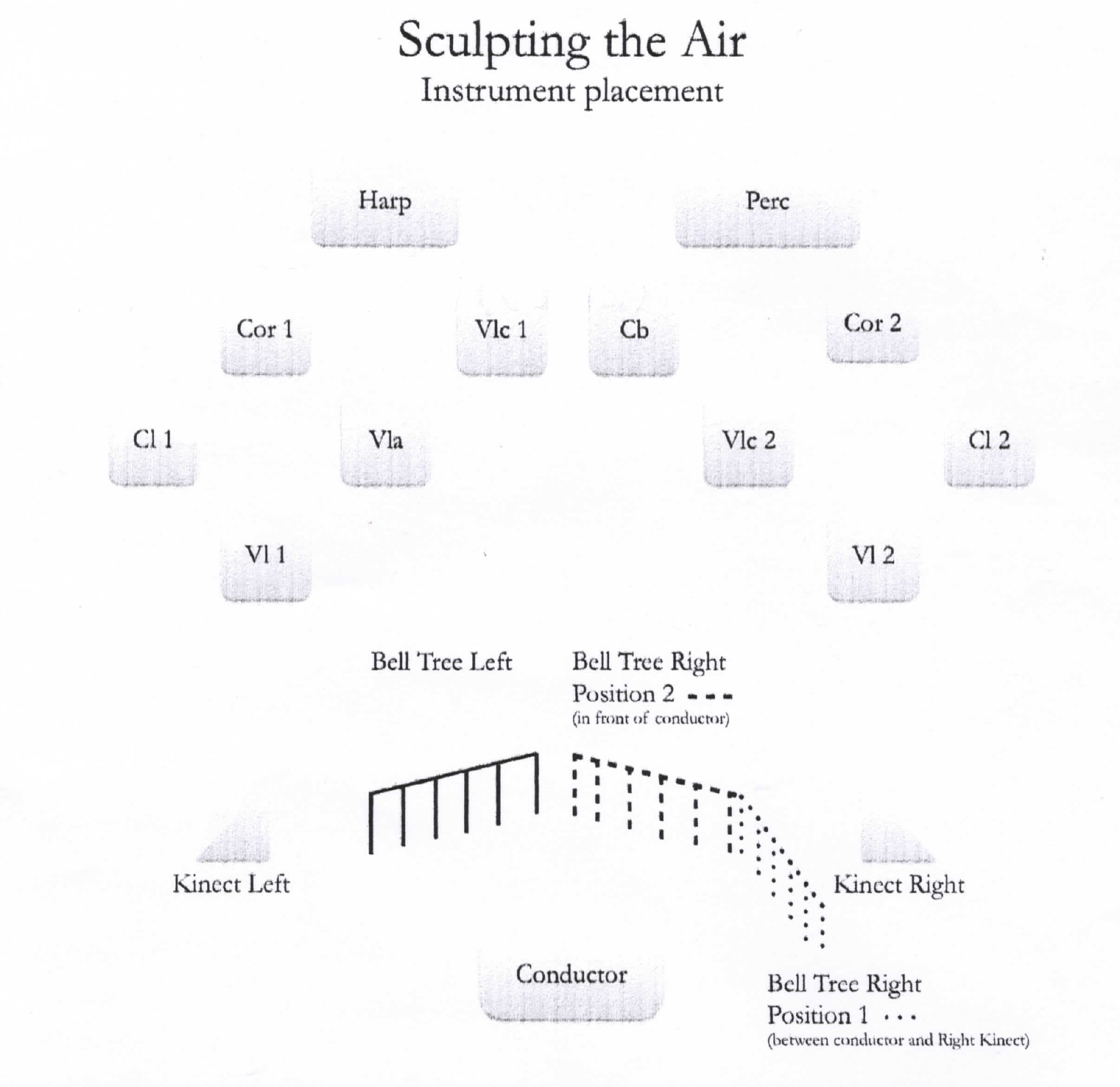

Final Setup

In this work, the musicians are divided into two groups placed in front of the conductor, on the left and right sides of the stage, respectively. The former is made up of clarinet in Bb, horn in F, harp, violin, viola and cello, and the latter of clarinet in Bb (doubling bass clarinet), horn in F, violin, cello, contrabass and percussion (vibraphone, snare drum and bass drum prepared with three metal rods attached to strings tied to the outer edges of the instrument, creating a buzzing sound when the drum is struck). The symmetrical nature of this division of the ensemble is reinforced by the electronics setup; the conductor has in front of him, to the left and the right, a Kinect device and a stand from which miniature bells are suspended [Figure 4].

Figure 4. Stage layout [© Part. Peters, 2015].

The stand on the left is not moved throughout the piece; the bells are suspended at the same height, and can swing parallel to the conductor’s shoulders. In the second part of the work, the conductor sets these bells in motion, such that they bump into each other, causing them to resonate. The stand on the right, in contrast, is mobile. In the first part of the piece, the bells on this stand are not suspended at the same heights, and are oriented at a 45° angle relative to the conductor’s shoulders, in the motion-tracking space of the Kinect on the right side of the stage [Figure 5a]. The conductor is required to set them in motion or stop them (taking care to prevent them from bumping against each other unintentionally) so that they occasionally enter the motion-tracking space at the point of maximal displacement and, in so doing, trigger the playback of sound files. In an electroacoustic intermezzo which separates the two main sections of the work, the conductor is required to re-align vertically the bells on the right side of the stage, positioning them directly in front of him in such a way that they form, along with those on the stand on the left, a “curtain” of bells [Figure 5b]. Subsequently, conducting movements are performed within this “curtain,” in such a way that the bells collide with each other, adding sustained, high-pitched sounds to the instrumental material.

Figure 5. Positions of the stands for suspended bells; Part I (a); and Part II (b) of the piece [© Arch. TM+].

The on-stage setup of the work comprises individual microphones for each instrument, the two Kinect devices, a computer screen with which the conductor can monitor his/her movements within the motion-tracking spaces, and audio foldback speakers, intended to improve the balance between the instrumental and electronic sound sources. Additional material was positioned around the CMD at the rear of the hall [Figure 3b], where the composer was also present; these items included: a MIDI controller with which the levels of the electronics could be adjusted and the playback of electroacoustic material could be triggered (as in the aforementioned central section); two tablets, each running Gestrument; and a computer running Ableton Live, MaxMSP and GestrumentKinectConverter, which outputs the audio signal to the loud-speakers in the hall. Virtual instruments (from the IRCAM Solo Instruments sound bank) were used via the UVI workstation, i.e., a dedicated playback tool. In addition to the front, stereo speakers, real-time treatments and virtual instruments are also diffused via an array of eight loud-speakers placed around the hall, creating a much more dynamic sound environment. There is also the option of adding four supplementary loud-speakers to the setup to enhance the immersive quality of the electronic sounds.

Notation through an organological lens

In this work, the instrumental notation is entirely “standard,” with the exception of the “boxes” used in the first movement, which contain motifs that must be repeated at a given tempo or with an accelerando over a specified duration. In such cases, the conductor is able to focus somewhat less attention on interactions with performers and more on manipulating the electronics. The electronic events handled by the CMD (starting playback of sound files, activating/deactivating effects, etc.) are notated on a separate staff at the bottom of the score. Finally, at the top of the score, two additional staves serve to indicate the movements and actions to be undertaken by the conductor (top staff for the right hand, lower one for the left) [Figure 6].

Figure 6. Sculpting the Air, bb. 106–110 [© Peters, 2015].

The novel nature of this work compelled the composer to enter into discussions with the conductor about the best approach to its notation. The adopted gestural notation may appear less detailed than that used in other contemporary works; this is because the composer opted to keep the physical gestures as simple as possible, and encouraged the conductor to follow his intuition in terms of controlling the electronics, while nonetheless respecting the indications included in the score.

Regarding the use of the suspended bells, two notational approaches were applied. When they are used as pendula (to trigger sound files), Nordin made use of an expanded staff (six lines) whereby each line represents one bell [Figure 7]. Tied notes indicate the periodic triggering of sound files, whereas isolated noteheads indicate that the motion of the corresponding bell should be stopped after a single oscillation.

Figure 7. Sculpting the Air, b. 1 [© Peters, 2015]. Expanded staff with six lines; noteheads represent the triggering of sound files by the pendular motion of the suspended bells.

In instances in which the bells are used in a “traditional” manner, Nordin applied a simple form of graphic notation: five small, parallel, vertical strokes enclosed in a box represent the strings to which the bells are attached. Above each box, an acronym provides information about the intensity (and hence, the resulting volume) with which the strings should be manipulated: “NSA” (“not so active”), “A” (“active”) and “VA” (“very active”) [Figure 8].

Figure 8. Sculpting the Air, b. 128 [© Peters, 2015]. Boxes indicating the actions to be performed by the conductor to cause the bells to strike against one another.

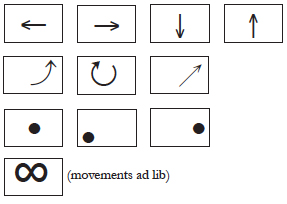

The various electronic treatments that will be presented in the following section are indicated in the score by name. The movements to be performed by the conductor to control them are notated inside boxes symbolising the two Kinect motion-tracking areas [Figure 9].

Figure 9. Examples of the notation used to indicate the types of movements/gestures to be performed by the conductor within the motion-tracking spaces. Arrows indicate the direction of movements; dots signify that the conductor must remain immobile; and the infinity symbol indicates ad libitum movements.

These notations are only general indications; the conductor is encouraged to let his/her ear be the ultimate guide. The durations of these gestures are generally indicated by horizontal lines or, where an action continues for an extended period, by arrows [Figure 10a]. Nordin also occasionally applied traditional note durations when a fixed rhythm is required [Figure 10b].

Figure 10. Sculpting the Air, bb. 101–105 and bb. 163–166 [© Peters, 2015], gestural notation for the conductor: a) Anti-clockwise, circular motion with the left hand; b) Linear movements with both hands on beats 3, 4, 1 and 2.

Electronics

The electronics comprise pre-recorded material as well as real-time treatments controlled by the conductor through his/her movements in the motion-tracking space.

Pre-recorded material

As mentioned above, the suspended bells, when used as pendula, periodically trigger the playback of sound files. These are chosen at random from a reservoir of samples that the composer created for a previous work, Aftermath (2010), and that comprises distorted resonances of prepared notes on a piano. Achieving this in performance is not as straightforward as one might imagine: the conductor has to continue to indicate the pulse while inducing or stopping the pendular movement of the bells. If the movement is overly exaggerated, the bells swing upward, out of the motion-tracking space, before returning to it on their downward journey, thereby triggering two samples instead of one.

Media 6. Marc Desmons rehearsing (alone) a passage in which he is required to conduct the musicians while also setting in motion/stopping, with a high level of precision, the suspended bells, which trigger the playback of sound files (IRCAM, Espace de projection, 2 March, 2015) [© Arch. Ircam – Bacot-Féron].
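The double-triggering problem described above follows naturally if a sample is fired each time a bell enters the tracking zone. The following Python sketch illustrates that logic only; it is not a reconstruction of the patch used in the piece, and the class name, sample labels and frame sequences are hypothetical.

```python
import random


class ZoneTrigger:
    """Fire one randomly chosen sample each time a tracked bell ENTERS the zone."""

    def __init__(self, samples, rng=None):
        self.samples = list(samples)          # hypothetical sample labels
        self.rng = rng or random.Random()
        self.inside = False                   # was the bell in the zone last frame?

    def update(self, in_zone):
        """Feed one tracking frame; return a sample label on entry, else None."""
        fired = None
        if in_zone and not self.inside:       # rising edge: outside -> inside
            fired = self.rng.choice(self.samples)
        self.inside = in_zone
        return fired


def count_triggers(frames, samples=("res_01", "res_02", "res_03")):
    """Count how many samples a sequence of in-zone/out-of-zone frames fires."""
    trigger = ZoneTrigger(samples, random.Random(0))
    return sum(1 for frame in frames if trigger.update(frame) is not None)


# Moderate swing: the bell passes through the zone once.
moderate = [False, True, True, False]
# Exaggerated swing: the bell rises out of the zone and re-enters on the way down.
exaggerated = [False, True, False, True, False]

print(count_triggers(moderate), count_triggers(exaggerated))
```

Under this assumption, an exaggerated swing produces two entries into the zone, and therefore two samples, within what the conductor intends as a single oscillation.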

Short fixed-media passages, created in the studio, serve as intermezzi between the various sections of the work. Generally, these are made up of several sound files, triggered by the CMD, which merge into each other. A long fixed-media intermezzo separates the two main parts of the work; its presence achieves a clear break between the contrasting first and second parts. It also provides the conductor with time to reposition the suspended bells on the right side of the stage (which he is required to do in the dark), such that in the second part of the piece, they are in line with those on the left, creating a “curtain” of bells.

Real-time Treatments

In this work, real-time treatments can be categorised into two groups: (i) three effects which are applied directly to the instrumental material performed by the ensemble, i.e., looping, delays and freezes; and (ii) the use of virtual instruments in Gestrument. The latter is controlled by the movements of the conductor, which are converted into MIDI data via GestrumentKinectConverter.

Looping

A looping effect is activated when the conductor’s hand enters the motion-tracking space. While the hand remains there, the material being performed by the ensemble is recorded. When the conductor removes his/her hand, the recording stops and the recorded fragment is played back in a loop via the loudspeakers. This continues until the recording of a new loop is triggered [Media 7].

Media 7. Marc Desmons rehearsing (with the ensemble) a passage in which he triggers a looping effect (IRCAM – Studio 5, 23 February, 2015) [© Arch. Ircam – Bacot-Féron].
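The looping behaviour can be read as a small state machine driven by the presence of the hand. The sketch below is a simplified illustration in Python that processes one sample at a time; the class and method names are hypothetical. It also assumes that the previous loop falls silent as soon as a new recording begins, which the description above does not specify.

```python
class GestureLooper:
    """Record while the hand is in the tracking space; loop the result once it leaves."""

    def __init__(self):
        self.recording = False
        self.buffer = []   # fragment currently being recorded
        self.loop = []     # fragment currently being looped
        self.pos = 0       # read position inside the loop

    def process(self, hand_present, sample):
        """Consume one input sample and return one loop-output sample."""
        if hand_present and not self.recording:
            # Hand enters: start a new recording (assumed to silence the old loop).
            self.recording = True
            self.buffer = []
            self.loop = []
            self.pos = 0
        elif not hand_present and self.recording:
            # Hand leaves: stop recording and start looping the fragment.
            self.recording = False
            self.loop = self.buffer
            self.pos = 0

        if self.recording:
            self.buffer.append(sample)
            return 0.0
        if not self.loop:
            return 0.0
        out = self.loop[self.pos]
        self.pos = (self.pos + 1) % len(self.loop)
        return out


looper = GestureLooper()
hands = [True, True, True, False, False, False, False, False]
signal = [1.0, 2.0, 3.0, 9.0, 9.0, 9.0, 9.0, 9.0]
out = [looper.process(h, s) for h, s in zip(hands, signal)]
print(out)  # [0.0, 0.0, 0.0, 1.0, 2.0, 3.0, 1.0, 2.0]
```

The three samples captured while the hand is present are replayed cyclically after it leaves, regardless of what the ensemble plays afterwards.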

The fragment to be looped may also be altered by the movements of the conductor’s hand within the motion-tracking space. Based on the position of his/her hand, the balance of the various instruments (relative to each other) may be altered while the recording is being made. The central point corresponds to unity among all instruments (which may or may not be playing at the same volume as each other), while the outer areas are assigned to individual instruments. As such, based on the position/motion of the conductor’s hand, the signal from a given instrument can be amplified or attenuated. In other words, the motion-tracking space represents a sort of “instrumental cartography” whereby the intensity of the signal of each instrument can be raised or lowered. Upon removing the hand from the tracking space, the new recording, with intensity characteristics based on this process, is played back in a loop.
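One possible way to picture this “instrumental cartography” is to place each instrument at an anchor point around the edge of the tracking space and to derive a gain from the hand's distance and direction relative to the centre. The Python sketch below is purely illustrative: the circular layout, the cosine weighting, the gain range of 0 to 2 and the instrument labels are all assumptions and are not taken from the actual setup.

```python
import math


def balance_gains(x, y, instruments):
    """Map a hand position to one gain per instrument.

    x, y are normalised coordinates in [-1, 1], with (0, 0) at the centre
    of the tracking space. At the centre every gain is 1.0 (unity); towards
    the edge, the instrument anchored in that direction is boosted and the
    one opposite is attenuated.
    """
    radius = min(1.0, math.hypot(x, y))
    angle = math.atan2(y, x)
    gains = {}
    for i, name in enumerate(instruments):
        anchor = 2 * math.pi * i / len(instruments)   # hypothetical anchor angle
        gains[name] = 1.0 + radius * math.cos(angle - anchor)
    return gains


ensemble = ["clarinet", "violin", "viola", "contrabass"]  # illustrative labels
centre = balance_gains(0.0, 0.0, ensemble)
edge = balance_gains(1.0, 0.0, ensemble)
print(centre)
print(edge)
```

With the hand at the centre, all instruments are recorded at unity gain; with the hand at the edge nearest the first anchor, that instrument is doubled in level and the opposite one is silenced.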

Delays

The second real-time treatment is a delay which is applied to input from the instrumentalists. It operates in a manner similar to the loop, i.e., the presence of the conductor’s hand in the motion-tracking space triggers the effect, while removing the hand deactivates it. The balance of the various instruments can be controlled by movements of the hand. However, it differs from the looping process in two respects. First, playback of the recording is not looped; rather, the delays interrupt each other when a certain number of recorded samples, pre-defined by the composer, are being played back simultaneously. Second, each instrument has its own delay duration, from one to eight seconds, creating a phasing effect among the various delayed instrumental lines.
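The two differences from the looping process can be sketched in Python as follows. The source gives only the range of delay durations (one to eight seconds) and states that a pre-defined number of simultaneous playbacks causes delays to interrupt each other; the even spacing of delay times, the “oldest first” interruption rule and all names below are assumptions made for illustration.

```python
from collections import deque


def assign_delay_times(instruments, shortest=1.0, longest=8.0):
    """Give each instrument its own delay time, spread evenly over the range."""
    if len(instruments) == 1:
        return {instruments[0]: shortest}
    step = (longest - shortest) / (len(instruments) - 1)
    return {name: shortest + i * step for i, name in enumerate(instruments)}


class VoiceLimitedPlayback:
    """Allow at most max_voices delayed fragments to sound at once."""

    def __init__(self, max_voices):
        self.max_voices = max_voices
        self.active = deque()   # fragments currently sounding, oldest first

    def start(self, fragment_id):
        """Start a fragment; return the fragment it interrupts, or None."""
        interrupted = None
        if len(self.active) >= self.max_voices:
            interrupted = self.active.popleft()   # assumed rule: cut the oldest
        self.active.append(fragment_id)
        return interrupted


# Eight hypothetical instrumental lines, labelled generically.
delays = assign_delay_times([f"line_{i}" for i in range(1, 9)])
playback = VoiceLimitedPlayback(max_voices=2)
events = [playback.start(f) for f in ("a", "b", "c")]
print(delays)
print(events)
```

Because each line returns after a different interval, the delayed copies drift against one another, which is one way to understand the phasing effect mentioned above.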

Freezes

The third real-time process is a “freeze” effect, i.e., the momentary texture being played by the instrumentalists at a given instant is sustained. This is achieved using granular synthesis: a very short extract of instrumental material is recorded, i.e., a few dozen milliseconds, and then played back in a loop. Using an amplitude envelope, it is possible to mask the clicks that would occur with each loop in the playback. The result is such that the listener has the impression that the sound has become “frozen” in time. Once activated, the process then mutes the incoming signal from the instrumentalists (without which the effect would be far less convincing). Once again, the conductor has the ability to manipulate the balance of the instruments by moving his/her hand within the motion-tracking space [Media 8].

Media 8. Marc Desmons rehearsing (with part of the ensemble) a passage featuring the “freeze” effect applied to the input from the instrument microphones (IRCAM – Studio 5, 23 February, 2015) [© Arch. Ircam – Bacot-Féron].
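The role of the amplitude envelope can be illustrated with a short Python sketch. It uses a Hann window with 50% overlap-add, a common choice for this kind of granular sustain but an assumption here, since the source does not name the envelope or the overlap scheme. The function names and the tiny grain length are hypothetical; at a 44.1 kHz sampling rate, a grain of a few dozen milliseconds would contain roughly one to two thousand samples.

```python
import math


def hann(n):
    """Periodic Hann window; copies overlapped at a hop of n/2 sum to 1."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / n) for i in range(n)]


def freeze(grain, num_repeats):
    """Sustain a short grain by overlap-adding enveloped copies of it."""
    n = len(grain)
    if n % 2 != 0:
        raise ValueError("grain length must be even for a 50% overlap")
    hop = n // 2
    window = hann(n)
    shaped = [g * w for g, w in zip(grain, window)]   # fades remove the clicks
    out = [0.0] * (hop * (num_repeats - 1) + n)
    for k in range(num_repeats):
        start = k * hop
        for i, value in enumerate(shaped):
            out[start + i] += value
    return out


# A constant test grain makes the effect of the envelope easy to inspect.
frozen = freeze([1.0] * 8, num_repeats=4)
steady = frozen[4:16]   # region where two grains always overlap
print(steady)
```

In the overlapped region the enveloped copies sum to a constant level, so the junction between successive repetitions produces no discontinuity, whereas looping the raw grain would jump from its last sample to its first.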

Virtual instruments

The MIDI data generated by the physical movements of the conductor in the motion-tracking space are transmitted to a UVI Workstation which activates/deactivates the virtual instruments of the “IRCAM Solo Instruments” sound bank. This constitutes a literal “translation” of the Gestrument workspace, whereby output is based not on movements within the two-dimensional space of the tactile screen, but rather on movements in the three-dimensional motion-tracking area [Media 9]. The number of activated pixels relative to the “depth” of the movement within the motion-tracking area defines the “pulse density” in Gestrument, i.e., the greater the number of activated pixels, the more pitches are played. In keeping with the standard configuration of Gestrument, movement in the lower region of the motion-tracking area results in the playback of lower pitches, while movement in the higher region plays back higher ones. Similarly, when the conductor’s hands are close together or positioned towards the musicians, playback durations are short; conversely, when the hands are further apart or positioned closer to the audience, longer durations result. The reservoir of pitches is based on a microtonal scale derived from Swedish folk music, which was formalised in ScaleGen. The spatial diffusion of the virtual instruments via the loudspeakers is intended to simulate the positions of the corresponding on-stage acoustic instruments, in order to create a kind of hidden, “duplicate” ensemble.

Media 9. Marc Desmons (alone) rehearsing a passage in which virtual instruments controlled by Gestrument overlap with acoustic instrumental material (in MIDI format). The computer monitors in front of Jesper Nordin and Manuel Poletti are displaying the motion-tracking areas of the left and right hands of the conductor, respectively (IRCAM, 12 November, 2014) [© Arch. Ircam – Bacot-Féron].
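The three mappings described above (height to pitch, activated pixels to pulse density, hand separation to duration) can be summarised in a short Python sketch. It is not the Gestrument or GestrumentKinectConverter implementation: the function name, the normalised input ranges, the duration bounds and the placeholder scale are assumptions, and the scale values in particular do not reproduce the folk-derived scale used in the piece.

```python
def map_gesture(height, active_pixels, max_pixels, hand_distance,
                scale, min_dur=0.1, max_dur=2.0):
    """Translate one tracking frame into (pitch, pulse_density, duration).

    height and hand_distance are normalised to [0, 1]; scale is an ascending
    list of pitches (here fractional MIDI note numbers).
    """
    def clamp(v):
        return max(0.0, min(1.0, v))

    # Higher hand position selects a higher degree of the scale.
    index = round(clamp(height) * (len(scale) - 1))
    pitch = scale[index]
    # More activated pixels (deeper movement) means more pitches per unit time.
    pulse_density = clamp(active_pixels / max_pixels)
    # Hands close together give short durations, hands far apart long ones.
    duration = min_dur + clamp(hand_distance) * (max_dur - min_dur)
    return pitch, pulse_density, duration


# Placeholder microtonal scale, NOT the scale formalised in ScaleGen for the piece.
placeholder_scale = [60.0, 61.5, 63.5, 65.0, 67.0, 68.5, 70.5, 72.0]

low = map_gesture(0.0, 0, 100, 0.0, placeholder_scale)
high = map_gesture(1.0, 100, 100, 1.0, placeholder_scale)
print(low)
print(high)
```

Each returned triple could then be converted into MIDI note messages for the sampler; that step is omitted here because the source does not detail it.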

Structure of the Piece

Sculpting the Air is made up of two main contrasting sections, each of which may be divided into sub-sections in which the parameters of different real-time effects are varied via the physical movements of the conductor [Figure 11].

Figure 11. Motions performed by the left (MG) and right (MD) hands of the conductor to control the electronics and/or activate the suspended bells. On occasion, effects applied in one section carry over into the following one, e.g., the triggering of sound file playback via the pendular motion (mvt) of the bells.

Part I

Section I-0 (b. 1)

At the beginning of the piece, the stage is in darkness; as such the audience cannot see that the conductor is silently causing the suspended bells to oscillate back and forth in a pendular motion. When a bell swings into the motion-tracking space, it triggers the playback of a sample, chosen at random from a reservoir of some 20 samples taken from Aftermath (2010) for saxophone quartet and electronics [Media 10]. Collectively, the motion of the bells generates complex rhythms which evolve via interactions with each other and through the gradually decreasing amplitude in the motion of each individual bell; the effect is reminiscent of Pendulum Music by Steve Reich. In beginning the work in this way, the composer presents to the audience the key principle of the electronic setup: interactive, immaterial control of the electronic sounds.

Media 10. Sculpting the Air, opening measure (Maison de la Musique, Nanterre, 13 June, 2015) [© Arch. TM+, 2015].

Section I-1 (bb. 2-48)

While the sound files continue to play back periodically according to the motion of the bells, the musicians begin to play short, rapid, erratic sequences which are separated by silence. The conductor is required to alternate between the left hand and the right to show the pulse, as the right hand is also used to set in motion or immobilise the bells, while the left also controls the looping effect applied to fragments of instrumental material [Media 11]. Looping is triggered on five occasions in this section; the composer notates this in the score using brackets. As the final instrumental motif of this section slowly fades, three sound files emerge (based on material recorded with the assistance of Florent Jodelet [Figure 2d]), each lasting some 30 seconds, collectively forming a sonic continuum.

Media 11. Sculpting the Air, bb. 18-25 [© Arch. TM+, 2015].

Section I-2 (bb. 49–77)

The aforementioned “erratic” instrumental motifs return at the beginning of this section, albeit now without the addition of electronic processing; however, here, the “looping” is notated in the instrumental material, i.e., the instrumentalists repeat short fragments which, in combination, give rise to a complex polyphonic texture. While these instrumental loops are being performed, the conductor stops beating, leaving both hands free to once again set in motion the suspended bells, thereby triggering the sound files from the work’s opening [Media 12]. As this section progresses, the instrumentalists, independently of each other, perform a decrescendo, such that the various looped motifs gradually blur together.

Media 12. Sculpting the Air, bb. 69–77 [© Arch. TM+, 2015].

Section I-3 (bb. 78–114)

Here, while the suspended bells continue to trigger the playback of samples, the ensemble performs a four-voice canon whereby each voice comprises two instruments (b. 78: clarinet 1 + violin 1; b. 81: clarinet 2 + violin 2; b. 83: viola + clarinet 2; b. 84: clarinet 2 + contrabass). At the same time, the conductor activates the delay effect with his/her left hand (eight times in total), thereby increasing the density of the polyphonic texture [Media 13]. Starting in b. 99, Nordin introduces new, repetitive motifs, once again allowing the conductor to focus on controlling the delays.

Media 13. Sculpting the Air, bb. 78-94 [© Arch. TM+, 2015].

Section I-4 (bb. 115-126)

The beginning of this section corresponds to the climax of the piece. Each musician performs motifs made up of saturated sounds, played at a rapid tempo (♩=204) and an extremely loud dynamic (fff or fff). At the same time, the conductor applies several delay lines, further increasing the sonic intensity. Starting in b. 119, the instrumentalists begin to leave space between each repetition, thereby decreasing the density of the material and making room for the sound files triggered by the suspended bells to be heard. Here, the electronic samples are no longer the saturated piano sounds from the opening section, but rather, instrumental samples taken from the Vienna Symphonic Library (VSL) sound bank [Media 14]. This transition takes place gradually, according to a tempo chosen by the conductor based upon the cyclical motion of the suspended bells (i.e., ♩=116-144). The ensemble then repeats a chord several times, on the first beat of each bar, progressively getting louder (pppp to mp). In b. 125, the conductor stops beating but indicates to the musicians to slow the tempo and play less loudly, resulting in the disintegration of the repeated chord and leaving space for the emergence of the electroacoustic intermezzo.

Media 14. Sculpting the Air, bb. 115-122 [© Arch. TM+, 2015].

Part II

Section II-0 (bb. 127-128)

This electroacoustic intermezzo, played back in darkness, was created using sounds recorded in a work session with Jodelet, as well as the compressed/stretched piano resonance sounds which featured in Part I. During this transitional section, the conductor repositions the stand holding the bells on the right side of the stage, bringing it into a straight line with the second (left) stand, and in so doing, creating a “curtain” of suspended bells. In order for the bells to all be at the same height, which is necessary for them to bump into each other, the conductor is required to carefully adjust the length of string attaching each bell to the stand. At the conclusion of this intermezzo, the conductor agitates the bells, allowing them to bump against one another with three distinct degrees of force, defined by the composer as NSA, A and VA [Media 15].

Media 15. Sculpting the Air, beginning of b. 128 [© Arch. TM+, 2015].

Section II-1 (bb. 129-157)

Part II of Sculpting the Air is more subdued and melodic in character; a much slower tempo is used (♩= 69). Initially, two musicians (violin I and clarinet I) perform melodic fragments constructed from the aforementioned microtonal folk scale, with the other instruments joining in one by one. The conductor beats with the right hand while playing the suspended bells with the left. No electronic treatments are applied in this section [Media 16].

Media 16. Sculpting the Air, bb. 129-143 [© Arch. TM+, 2015].

Section II-2 (bb. 158–211)

Here, the role of the conductor becomes increasingly complex. He/she is required to indicate the beat and set in motion the suspended bells, but also to trigger the virtual instruments (UVI sound banks in Gestrument) via movements in the motion-tracking space. The reservoir of pitches is based on the same microtonal scale used in the instrumental material. The virtual instruments are “active” across the entire motion-tracking space. Thus, the conductor is required to improvise a “gestural cadenza” (bb. 171–178), as it came to be described during rehearsals, over a pedal tone played by the harp, coupled with a tremolo played on several percussion instruments [Media 17]. This sparse acoustic instrumental texture allows the audience to clearly hear the material played back by the virtual, “duplicate” ensemble (the instrumentation of which matches that of the performers on stage). In this section, Gestrument operates according to its standard settings, i.e., rhythms become denser when the hand is close to the centre of the tracking space, and sparser when it is towards the exterior; higher pitches occur when the hands are raised and lower ones when they are lowered; and finally, as mentioned previously, with increased gestural depth, a larger number of pixels are activated, causing more pitches to sound.

Media 17. Sculpting the Air, bb. 167-184 [© Arch. TM+, 2015].

Section II-3 (bb. 212-265)

In the concluding section (with an even slower tempo, i.e., ♩=58), the instrumental material grows progressively more rarefied and caesuras become more frequent. These gaps in the instrumental material are partially filled with “frozen” sounds, triggered by the conductor on twelve occasions. In such cases, the conductor should attempt to capture and sustain a specific pitch or chord played by the ensemble. Once this effect has been activated, the conductor can control the instrumental balance by moving his/her hands within the motion-tracking space.

Media 18. Sculpting the Air, bb. 212-224 [© Arch. TM+, 2015].

Conclusion

Sculpting the Air is the fruit of close collaboration among the composer, the CMD and the conductor. To document the evolution of this important project, the authors meticulously recorded the process of its creation. Progress in terms of defining the technological setup (from decisions regarding the use and placement of motion-tracking devices to the determination of the parameters defining the electronic material, such as thresholds, filters, envelopes and timbres) largely occurred during the testing phase and through numerous discussions between Nordin, Poletti and Desmons. This collaborative approach regarding the development/use of ad hoc technology and the factors defining the modes of interaction with the electronics deeply influenced the compositional and performance processes. Thus, this project may be seen as the result of constant interaction regarding compositional ideas, technological tools and approaches to conducting, which the analytical method applied in this paper has attempted to present in detail.

Sculpting the Air is the first part of a trilogy, yet to be completed, whose focus is on the use of Gestrument, developed by Nordin himself to address his own distinct compositional priorities. As the composer emphasises, this tool “minimises and simplifies information, while also allowing the user to control the resulting exformation with a high degree of precision” [Szpirglas, 2020]. Thus, it makes it possible to explore the concept of exformation, which manifests itself metaphorically in this triptych through the use of electronics. Through real-time signal processing, a new layer of meaning is superimposed upon the instrumental material, in the form of echoes and reminiscences. Part 2 of the trilogy, Visual Exformation (2016) for string quartet, real-time electronics and interactive light sculpture, was premiered at the Musica Festival in Strasbourg on 4 October, 2016. Performed by the Diotima Quartet, the work was also created with the assistance of Manuel Poletti, as well as Ramy Fischler and Cyril Teste (interactive installation), and Thomas Goepfer (CMD).

The technical setup for Sculpting the Air was re-used in subsequent works by Nordin, such as Emerge (2017) for clarinet, orchestra and Gestrument, and Emerging from Currents and Waves (2018) for clarinet and conductor (as soloists), orchestra, electronics and interactive visual installation.

Sculpting the Air was performed again in 2020 by Ensemble Intercontemporain, conducted by Lin Liao. Owing to its playful and relatively accessible nature, the technology applied in the work aroused considerable interest among the ensemble members, encouraging the composer to continue his research into physical gestures (without necessarily seeking to “develop new gestural languages”) [Szpirglas, 2020]. Sculpting the Air represents both a decisive step and the opening of new compositional horizons for Jesper Nordin.

Resources

Texts

[Bacot et Féron, 2016] – Baptiste Bacot and François-Xavier Féron, “The creative process of Sculpting the Air by Jesper Nordin. Conceiving and performing a concerto for conductor with live electronics”, Contemporary Music Review, vol. 35, No. 4–5: “Gesture-Technology Interaction in Contemporary Music”, 2016.

[Nordin, 2015] – Jesper Nordin, “Sculpting the Air – Notice de concert”, ManiFeste 2015. (online: https://brahms.ircam.fr/works/work/36339/#program).

[Nørretranders, 1998] – Tor Nørretranders, The User Illusion: Cutting Consciousness Down to Size, English translation by Jonathan Sydenham, London: Penguin Books, 1998.

[Szpirglas, 2020] – Jérémie Szpirglas, “‘Sculpter l’air’. Entretien avec Jesper Nordin, compositeur”, 30 January, 2020 (online: https://www.ensembleintercontemporain.com/fr/2020/01/sculpter-lair-entretien-avec-jesper-nordin-compositeur/).

[Zattra et Donin, 2016] – Laura Zattra and Nicolas Donin, “A questionnaire-based investigation of the skills and roles of Computer Music Designers”, Musicae Scientiae, vol. 20, No. 3, pp. 436–456, 2016.

Archives

[Arch. TM+] – Video recording of the premiere of Sculpting the Air by Ensemble TM+, conducted by Marc Desmons, 13 June, 2015, Maison de la Musique, Nanterre.

[Arch. Ircam – Bacot-Féron] – Corpus of data (writings, audio, video) created in 2014 and 2015 by the authors during the production phase of Sculpting the Air. This research took place within the framework of the ANR GEMME project (Musical Gestures: models and experiments, 2012–2016).

[Arch. Ircam – Production] – Jesper Nordin, “Projet Nordin STA TM + présentation”, 2014.

[Arch. Ircam – Ressources]

Jesper Nordin, Sculpting the Air, 13 June, 2015, Maison de la musique de Nanterre

Ensemble TM+ / Marc Desmons (conductor), Manuel Poletti (Computer Music Designer) (online: https://medias.ircam.fr/xb4904f [concert], https://medias.ircam.fr/xb68755 [general]).

Jesper Nordin, Sculpting the Air, 7 February, 2020, Cité de la musique

Ensemble intercontemporain / Lin Liao (conductor), Manuel Poletti (Computer Music Designer) (online: https://medias.ircam.fr/xe75995 [concert]).

“Sculpting the Air: a conversation with Jesper Nordin and Marc Desmons on building and performing gestural instruments”, study day Inventing Gestures: New Approaches to Movement, Technology and the Body in Contemporary Composition and Performance, 8 June, 2015, IRCAM (online: https://medias.ircam.fr/xe6976a).

Score

[Part. Peters, 2015] – Jesper Nordin, Sculpting the Air, Henry Litolff’s Verlag C. F. PETERS Leipzig London New York, EP 14106, 2015.

Video recordings

[EV Grame, 2011] – “wacom improvising”, video made available online on 20 May, 2011, on the Grame Vimeo channel: https://vimeo.com/24002171.

[EV Gestrument, 2014] – “GESTRUMENT - The Revolutionary Gesture Instrument for iOS”, video made available online on 24 February, 2014, on the Gestrument YouTube channel: https://youtu.be/J97Ao_3DgQQ.

[EV ScaleGen, 2014] – “ScaleGen”, video made available online on 22 September, 2014, on the Gestrument YouTube channel: https://youtu.be/7z3oWqH3EfM.

Acknowledgements and citation

The authors wish to extend warm thanks to Jesper Nordin, Marc Desmons, Manuel Poletti and Martin Antiphon. Thanks also to the musicians of Ensemble TM+ and to the entire technical team for (among other things) their video recording of the premiere performance of the piece.

To cite this article:

Baptiste Bacot and François-Xavier Féron, “Jesper Nordin – Sculpting the Air”, ANALYSES – Œuvres commentées du répertoire de l’Ircam [online], 2021. URL : http://brahms.ircam.fr/analyses/SculptingTheAir/.
