Analysis of Songes (1979) by Jean-Claude Risset

Introduction

Summary

Songes (1979) by Jean-Claude Risset is emblematic of the early years of production at IRCAM. It is a work for fixed media (tape) in which the composer combined computer-generated syntheses with processed instrumental samples. The present analysis provides an in-depth look at the work’s genetic makeup, i.e., the original code used in its creation, written in the Music V programming language. It is an “interactive” analysis in the sense that you, the reader, are invited, thanks to Faust, an audio programming language that can run in the web browser, to experiment with Risset’s code, to modify its settings and to make use of it yourself.

After having briefly discussed the scientific and musical background of the composer, we will present a detailed analysis of Songes. Notions which were recurrent throughout Risset’s creative career will be emphasized in this analysis, e.g., simulacra of bells, the illusion of space, connections with the field of psychoacoustics, etc. In addition to these key concepts, aspects which are unique to Songes will be discussed, such as the intervallic relationships and timbral space which characterise the work. Numerous animations and musical examples will accompany the reader throughout this interactive presentation.

Risset, composer/researcher

Jean-Claude Risset was born on 13 March 1938 in Le Puy-en-Velay and died on 21 November 2016 in Marseille. In the early 1960s, after graduating in physics from the École Normale Supérieure in Paris, he undertook research which would fuse his scientific knowledge with his aspirations as a composer. These were his first steps towards merging art and science, aided by the new technologies emerging at that time. Risset’s interest in music became manifest at an early age. Influenced by his parents, who were music lovers and had a piano at home, he took his first private music lessons at the age of six. As a teenager, he studied with Robert Trimaille (a former student of Alfred Cortot), who encouraged him to play the works of Debussy, among others [EV Dars and Papillault, 1999], emphasizing the importance of phrasing and timbre. For Risset, listening to the “interior of sound” meant experimenting with the intensity of individual notes within a chord [Veitl, 2010, p.156]. This line of thinking, which largely grew out of his piano studies, led Risset to conceive of sound as an acoustic phenomenon. Later, while pursuing studies in both music and science, Risset embarked upon a long period of artistic research exploring the interiors of sonic objects.

In the beginning, my scientific and musical education were completely separate. While preparing for the entrance exams for the best universities, I refused to stop practicing the piano, not because I thought then of exploring the synthesis between music and science, but because I was passionate about it, and it was a perfect diversion, a completely different activity which compensated, to some extent, for the dryness of studies of the sciences [Guillot, 2008, p. 27]

His research into the “composition of sound itself,” which presaged similar lines of thinking some years later among practitioners of spectral techniques, seems to have its origins in a meeting with André Jolivet in 1962 at the Centre Français d’Humanisme Musical summer academy in Aix-en-Provence. Jolivet stated that, following a meeting with Edgard Varèse, he had begun to compose with sounds as well as with notes. One may therefore trace a line from Varèse via Jolivet to Risset, both in the relationship between notes and sound and in the inclination to explore the interior of sounds themselves; the latter notion was also significant in the work of the British composer Jonathan Harvey, as well as that of the so-called spectralist composers. Following this meeting, Risset composed a new orchestral work, which was premiered in 1963 by the Marseille Orchestra. The young Risset went on to study music theory with Suzanne Demarquez and composition and orchestration with Jolivet.

Risset was also interested in technological innovations related to sound, and discovered a publication by Max Mathews on the potential musical applications of computers [Mathews, 1963]. Bringing his two spheres of interest together, he went to work alongside Mathews, the father of digital sound synthesis, at the Bell Telephone Laboratories in 1964 [EV Risset, 2016], where he undertook research on the synthesis of brass instrument sounds, the early results of which were the focus of his doctoral thesis. Subsequently, Risset became more interested in musical creation as a means of unifying the fields of art, science and technology.

My scientific work, which began with nuclear physics, was directed towards work in the fields of acoustics and computer music, as well as study of auditory cognition. The vast majority of my efforts in this regard were strongly motivated by music; sometimes, music provided an opportunity, a context for purely scientific investigation [Risset, 1991, p. 274]

In 1968–69, Risset composed two substantial works using digital synthesis, Computer Suite from Little Boy (1968) and Mutations (1969), the latter commissioned by Ina-GRM. In these pieces, the composer created quasi-instrumental sounds, arpeggios which defined harmonies, and paradoxical sounds resulting from his scientific research [Risset, 1978a; 1978b; 1986; 1989]. Both works used sounds included in a library of syntheses that Risset created in 1969 at the request of John Chowning for his class at Stanford University introducing students to digital synthesis. This historical library contains the outcomes of Risset’s synthesis experiments at the Bell Telephone Laboratories [EA CD Wergo, 1995]. Risset would continue working in this field, culminating in the archetypal notion of “the composition of sound itself” in 1986 (“la composition du son lui-même” [Risset, 1986; Risset, 1990]). His research led to the introduction of revolutionary concepts in the fields of digital synthesis and psychoacoustics and, more broadly, made valuable contributions to art, science and technology.

Compositional Context

Upon returning to France in 1975, Risset joined the Project Team at IRCAM at the behest of Pierre Boulez. While there, he composed the mixed-music work Inharmonique (1977) and the acousmatic Songes (1979), the latter of which may be seen as a manifestation of his desire to wed the richness and variety of pre-recorded sounds with the precise control of electronic music afforded by digital technology [Arch. PRISM – Risset]. Songes represents the apogee of Risset’s rich and multifaceted compositional language, which had been refined through the creation of instrumental works such as Fantaisie (1963) for orchestra, computer works such as Computer Suite from Little Boy (1968) and Mutations (1969), and mixed-music works such as Dialogues (1975), Inharmonique (1977), Trois moments newtoniens (1977) and Mirages (1978) for 10 to 25 performers and fixed media. In Songes, recent advances in computer music made it possible to incorporate syntheses from Mirages into his library of objets sonores, giving rise to a distinct, novel form of musical unity. During this period, Risset was an advocate of “deferred-time” technology.

It is difficult to confer flexibility, life, and identity upon synthetic sound. Yet, to attempt to do so is important, because synthesis opens up so many possibilities. The analyses/syntheses required to internally modify acoustic sounds remain problematic, especially in real time. Therefore, the creation of quasi-instrumental syntheses is perhaps a more promising field of exploration; in any case, it is easier with the technology we have today [Risset, letter to Pierre Boulez, 29 September, 1979, Arch. PRISM – Risset].

This quotation must be viewed in its historical context, in which the gap between real-time and deferred-time processes was significant. Nonetheless, the basic objective of Songes was to create something entirely novel: to manipulate sonic identities, to leap from a mere sound into the most infinitesimally refined, oneiric dimensions, or, in other words, to go from “sounds to dreams” [Guillot, 2008]. Through his dual professional activities, Risset’s work represented a veritable dialogue between the theoretical sciences and composition, achieving a sort of positive feedback loop between the two [Risset, 1990; 1991]. Composed in 1979, a pivotal year marked by a change of departmental directors at IRCAM, Songes is emblematic of this fruitful merging of previously disconnected disciplines. For Risset, “the composition of sound itself” was a means of contributing to research in the field of psychoacoustics on “timbral space.” Such research also included the development of techniques and notions related to computer music, such as frequency contours, the illusion of space and phasing; these elements constitute the foundations of this landmark work, which marks the end of Risset’s first major creative period (1965–1979) and the beginning of his second (1980–1991).

Methodological Approach

While Songes has been widely discussed in numerous texts, articles and interviews, these resources have focused largely on the poetic aspects of the work, and do not go into detail regarding the composer’s use of technology and the compositional choices he made on that basis. Paradoxically, given the extent of the interest that this piece has aroused in the computer music scientific and artistic community, to date only one very short analysis of it has been published [Koblyakov, 1984]; another planned analysis was abandoned [Stroppa, 1984]; and just one university study has been published [Rix, 2012]. It is worth noting that a related work, Contours (1981), has been thoroughly analysed [Di Scipio, 2000; 2002]. In the absence of substantial academic research, we therefore draw in this paper upon the available archival and sketch material for Songes. Conscious of the difficulties of analysing “works whose production is based on computer technology” [Risset, 2001], the composer seems to have been careful to document his creative process in this piece and others. Thus, the original code created for Songes has been preserved, as have compositional schematics and sketches. Access to these archives allowed us to go beyond the study of the stereophonic and quadriphonic recordings. On the basis of the original Music V code for Songes, as well as that for Inharmonique (made available by Denis Lorrain [1980]), we propose an analysis focused not only on style and aesthetics but also on technique (in the sense of the “techniques of contemporary composition”), in order to better understand the way in which art, science and technology co-exist and inform one another.
Finally, it should be noted that this analysis is partly based on João Svidzinski’s thesis which sought to establish a methodology by which to model works of computer music [Svidzinski, 2018] in order to position them within an artistic setting, following an epistemological approach similar to that of Lorrain.

Presentation

Tools and Materials

Songes was composed in 1978–79 at IRCAM.

Hardware:

- PDP-10 computer (IRCAM).

Software:

- Sound synthesis via coding in the Music V environment.

- Software sketches (which were ultimately abandoned).

Materials:

- Samples of instrumental motifs recorded at IRCAM, also used in the mixed-music work, Mirages (1978).

- Original samples of instrumental motifs recorded at IRCAM (harp, trombone, flute, oboe, clarinet, horn, violin, viola and cello).

Stereophonic and quadriphonic versions

Songes is a work for fixed media which exists in two versions:

- A quadriphonic version intended to be played back in a concert setting in order to maximise the effect of the auditory spatial illusions in the work [EA Unpublished Ina].

- A stereophonic version representing a two-channel reduction, produced for the commercial distribution of the work [EA CD Wergo, 1988].

Use of the stereophonic version in a concert setting is discouraged because of potential discrepancies between the “internal space,” as delineated by the composer in the code, and the “external space” resulting from multi-planar diffusion via an acousmonium [Chion, 1988]. The four-channel version, comprising four audio files in AIFF format, is therefore the standard for public performances on a four-channel (or larger) setup, although other possibilities have been proposed by Svidzinski [2017]. Below are some of Risset’s recommendations for the performance of the work (from oral remarks or taken from his archives):

… as an addendum on the spatialisation: initially frontal from 5’ and notably at 7’11, the four loud-speakers should be perfectly balanced; the illusionary movements must submerge and skim around the listeners [Risset, excerpt from a letter to Marco Stroppa, 5 July, 1984, Arch. PRISM – Risset]

For the diffusion: the level at the beginning (0 to 1’30) should be such that the instrumental sounds are perceived as being realistic. The level should then be raised a little, without overdoing it (nonetheless, the crescendo at around 6’30 should be very strong) [Risset, addendum to the performance notes for Songes, Arch. PRISM – Risset]

Synopsis of the work

The title Songes [“dreams”] suggests a oneiric sound world, a gradual progression from an instrumental realm to an illusory one. “In Songes, I focused on the idea of a transition to a dream world; as the piece develops, the sonic elements gradually become less realistic and more oneiric. Not only do they evoke acoustic instruments less and less clearly, but they give the impression of breaking free of physical limitations” [Guillot, 2008, p. 128]. The work is divided into three sections, subtitled “Mirages”, “Cloches” [“bells”] and “Coda”.

The first part, “Mirages,” consists of “realistic” material which is progressively transformed to create an “illusory” sound world. The materials are derived from the eponymous mixed-music work which Risset composed the previous year. In the present work, samples were digitised, mixed and transformed. Digital synthesis was then used to assemble, superimpose and layer five instrumental motifs, each lasting 2 to 5 seconds. The instrumental material was recorded separately by musicians from the Ensemble Intercontemporain. The pitches of the recordings were modified in Music V, and digital technology also made it possible to manipulate their spatial aspects. Additionally, Risset used a “map-based” system in the “Esquisses” software (conceived by David Wessel and developed by Bennett Smith) to manipulate the qualities of various trills.

In the second part, “Cloches,” Risset used a number of synthesised sounds from the aforementioned library to create a passage featuring imaginary, mostly inharmonic bells. The spatialisation is marked by a “call-and-response” between the front and rear loudspeakers. The intervallic relationships of the motifs used in Mirages are applied again here, both as a basis for harmonic material and to produce new sound objects. In this section, sound occupies the entire frequency spectrum, from low to high, in contrast to the first section, which is predominantly in the mid-register. The bell sounds then become fluid, with inharmonic sounds melting into each other and creating a pulsing texture akin to the chirping of a cicada. The transition from one grain to the next is built upon “the relationships of existing frequencies and their components; this accumulation constitutes a crescendo which overloads the sound with an abundance of frequencies, from low to high” [Risset, 1978c].

In the third part, “Coda,” the composer introduces a new element: a low pedal tone (Bb, 58 Hz) enriched by a phasing effect, creating a foundation above which high-pitched sounds swirl, seemingly flying off into space. Here, Risset made novel use of Fourier synthesis to compose frequency contours that yield naturalistic sounds, whose morphology evokes a kind of imaginary birdsong. In this section, Risset used modules developed by John Chowning to control spatial elements: reverberation and phase-shifting between the left and right channels give the impression that the sound source is moving. The following figures provide a visual synopsis of the work: Figure 1 is a listening score created by the composer, and Figure 2 is a temporal schematic created by Florence Rix.

Figure 1. Listening score for Songes, created by Risset [© Arch. PRISM – Risset].

Figure 2. Temporal schematic for Songes [Rix, 2012, p. 10].

Compositional Techniques

While Songes demonstrates the continued exploration of material and concepts which were present in previous works, it also reveals entirely new preoccupations on the part of the composer, such as the wish to control interactions between syntheses and acoustic sounds using digital technology, an approach that he would go on to pursue in works such as Sud (1985) and Invisible (1996) [Risset, 1996]. In a letter to Boulez, Risset provided details of some of the compositional techniques he applied in the creation of Songes:

A) Simple treatments applied to instrumental sounds in Music V: mixing/superposition/frequency shifts/spatialisation/chorus/use of envelopes.

B) Exploration of timbral space using Sketchpad (D. Wessel).

C) Simplification of complex frequency modulations.

D) Spectral and spatial modelling by extended phasing (i.e., the addition of unevenly spaced sounds containing similar frequencies) [Risset, excerpt from a letter to Pierre Boulez, 29 September 1979, Arch. PRISM – Risset].

To these notions, we add three others:

E) Simulacra of bells.

F) Frequency contours.

G) Fluid textures.

Together, these techniques make it possible to create a synthetic overview of the structure of the work [Figure 3]; each is discussed in more detail below.

Figure 3. Compositional techniques applied in each part of Songes, with the corresponding time code relative to the stereophonic and quadriphonic versions.
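Technique D, “extended phasing,” can be approximated numerically. The sketch below is a minimal illustration under stated assumptions, not a reconstruction of Risset’s code: it assumes K equal-amplitude copies of one harmonic tone whose fundamentals are spaced by a constant Δ Hz (even spacing is a simplification of the “unevenly spaced” sounds in Risset’s description). The n-th harmonics of the copies then lie n·Δ Hz apart, so each group of harmonics beats at its own rate and comes back into phase every 1/(n·Δ) seconds. The function `harmonic_envelope` is a name introduced here; it returns the slowly varying magnitude of one such group.

```python
import cmath
import math


def harmonic_envelope(n: int, t: float, copies: int, delta_hz: float) -> float:
    """Magnitude of the summed n-th harmonics of `copies` detuned tones at time t.

    Assumes equal-amplitude copies. Copy k has fundamental f0 + k * delta_hz,
    so its n-th harmonic lies at n * f0 + n * k * delta_hz. Factoring out the
    shared n * f0 carrier leaves the slow envelope
    |sum_k exp(2j * pi * n * k * delta_hz * t)|, which does not depend on f0.
    """
    return abs(sum(cmath.exp(2j * math.pi * n * k * delta_hz * t)
                   for k in range(copies)))


if __name__ == "__main__":
    COPIES, DELTA = 3, 0.1  # hypothetical: three copies, 0.1 Hz apart
    for t in (0.0, 2.5, 5.0, 10.0):
        levels = [round(harmonic_envelope(n, t, COPIES, DELTA), 2) for n in range(1, 6)]
        print(f"t = {t:4.1f} s, harmonics 1-5: {levels}")
```

With these hypothetical values, all five harmonic groups are at full strength (3.0) at t = 0 and t = 10 s, whereas at t = 2.5 s only the fourth reaches 3.0 and the others fall to 1.0: the emphasis moves across the spectrum over time.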

The Music V Programming Language

Basic principles

Before going into further detail regarding the various aspects of Songes, it is necessary to present an overview of the syntax of the Music V programming language. Music V belongs to the Music N series of programmes developed at the Bell Telephone Laboratories by Mathews and his team. These programmes use a modular syntax: modules (unit generators, represented by alphanumeric symbols) each perform a specific task, such as multiplying two signals. The same approach has been used in later audio programming environments such as Csound and Max, which may be seen as descendants of the Music N series. The logic of this language is analogous to the “traditional” way of making music: code is divided into two basic parts, namely, the “orchestra” and the “score.” The former encompasses the global parameters (e.g., sampling rate) and the algorithmic instruments, while the latter deals with notes, i.e., timed parametric instructions which invoke actions from the instruments in the orchestra. In brief, an instrument is a digital entity that produces sound, playing the role of an instrument in a traditional ensemble. In the “score,” a time-ordered list of notes triggers events for each instrument and defines their parameters (e.g., start time, duration, frequency, amplitude).
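The orchestra/score split can be illustrated outside Music V. The Python sketch below is an analogy, not Music V syntax: an “instrument” is a function that turns note parameters into samples, the “orchestra” maps instrument numbers to such functions, and the “score” is a list of timed notes that the `perform` function (a name chosen here) mixes into one output buffer. The sampling rate and the instrument’s sine-with-linear-decay design are arbitrary assumptions.

```python
import math

SAMPLE_RATE = 8_000  # arbitrary, kept low so the example runs quickly


def sine_instrument(duration: float, amplitude: float, frequency: float) -> list:
    """Toy instrument: a sine oscillator shaped by a linear decay."""
    n = int(duration * SAMPLE_RATE)
    return [amplitude * (1 - i / n) * math.sin(2 * math.pi * frequency * i / SAMPLE_RATE)
            for i in range(n)]


# "Orchestra": instrument numbers mapped to sound-producing functions.
ORCHESTRA = {1: sine_instrument}

# "Score": (start s, instrument number, duration s, amplitude, frequency Hz).
SCORE = [
    (0.0, 1, 0.5, 0.5, 261.63),
    (0.25, 1, 0.5, 0.5, 392.0),
]


def perform(orchestra, score) -> list:
    """Render every note with its instrument and mix the results at their start times."""
    rendered = [(int(start * SAMPLE_RATE), orchestra[instr](dur, amp, freq))
                for start, instr, dur, amp, freq in score]
    out = [0.0] * max(offset + len(samples) for offset, samples in rendered)
    for offset, samples in rendered:
        for i, s in enumerate(samples):
            out[offset + i] += s
    return out


if __name__ == "__main__":
    mix = perform(ORCHESTRA, SCORE)
    print(len(mix) / SAMPLE_RATE, "seconds,", max(abs(s) for s in mix), "peak")
```

Adding a second instrument only means adding an entry to `ORCHESTRA`; the score refers to it by number, much as Music V’s “NOT” statements refer to instruments defined with “INS”.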

Figure 4. Example of a code snippet in Music V: (a) block diagram; (b) representation of an envelope; (c) waveform; (d) standard musical score; (e) Music V code [Mathews, 1969, p.54].

In the algorithm in Figure 4e, an instrument is defined between lines 1 and 5: “INS 0 1” marks its beginning and “END” its conclusion. The instrument is then instructed to play a single note, whose amplitude and frequency are controlled by two oscillators, “OSC”: line 2 sets the amplitude and line 3 the frequency. The output of the second oscillator is then sent to the audio output (module “OUT”, line 4), thereby generating sound. The “GEN” module (lines 6 and 7) determines the stored functions, i.e., the envelope (b) and the waveform (c). Finally, the “NOT” module (lines 8 and 9) triggers output from instrument 1. Taking a closer look at line 8 (“NOT 0 1 2 1000 .0128 6.70”): “0” is the start time in seconds; “1” is the number of the instrument that plays the note; “2” is the duration in seconds; “1000” is a relative amplitude; “.0128” sets the frequency of the amplitude oscillator; and “6.70” sets the frequency of the audio oscillator. This code (Figure 4e) can be compiled and run in Music V. To visualise the output, a transcription of the result in traditional notation is included [Figure 4d]. Additionally, the block diagram in Figure 4a depicts the various aforementioned modules, their inputs and outputs, and the flow of information within the instrument.
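The two values “.0128” and “6.70” are easier to read once one knows that Music V oscillators take a table-lookup increment (the number of samples of a stored function to advance per output sample) rather than a frequency in hertz, so that frequency = increment × sampling rate / table length. The sketch below assumes 512-sample stored functions and a 20,000 Hz sampling rate; neither value is stated in the text, but with them the envelope oscillator completes exactly one cycle in 2 seconds (the note’s duration) and the audio oscillator runs at about 261.7 Hz, close to middle C.

```python
# Assumptions (not stated in the text): 512-sample stored functions and a
# 20,000 Hz sampling rate for the Figure 4 example.
TABLE_LENGTH = 512
SAMPLE_RATE = 20_000


def increment_to_hz(increment: float,
                    table_length: int = TABLE_LENGTH,
                    sample_rate: int = SAMPLE_RATE) -> float:
    """Convert a table-lookup increment (table samples per output sample) to Hz."""
    return increment * sample_rate / table_length


def hz_to_increment(freq_hz: float,
                    table_length: int = TABLE_LENGTH,
                    sample_rate: int = SAMPLE_RATE) -> float:
    """Inverse conversion: the increment needed to produce freq_hz."""
    return freq_hz * table_length / sample_rate


if __name__ == "__main__":
    envelope_hz = increment_to_hz(0.0128)  # from "NOT 0 1 2 1000 .0128 6.70"
    audio_hz = increment_to_hz(6.70)       # last field of the same statement
    print(f"envelope cycle: {1 / envelope_hz:.3f} s")
    print(f"audio frequency: {audio_hz:.2f} Hz")
    print(f"increment for A4 (440 Hz): {hz_to_increment(440.0):.4f}")
```

Under these assumptions the “.0128” envelope increment is simply the value that makes the envelope span the whole 2-second note, which is why it changes whenever the duration changes.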

Illustration: the opening seconds of Songes

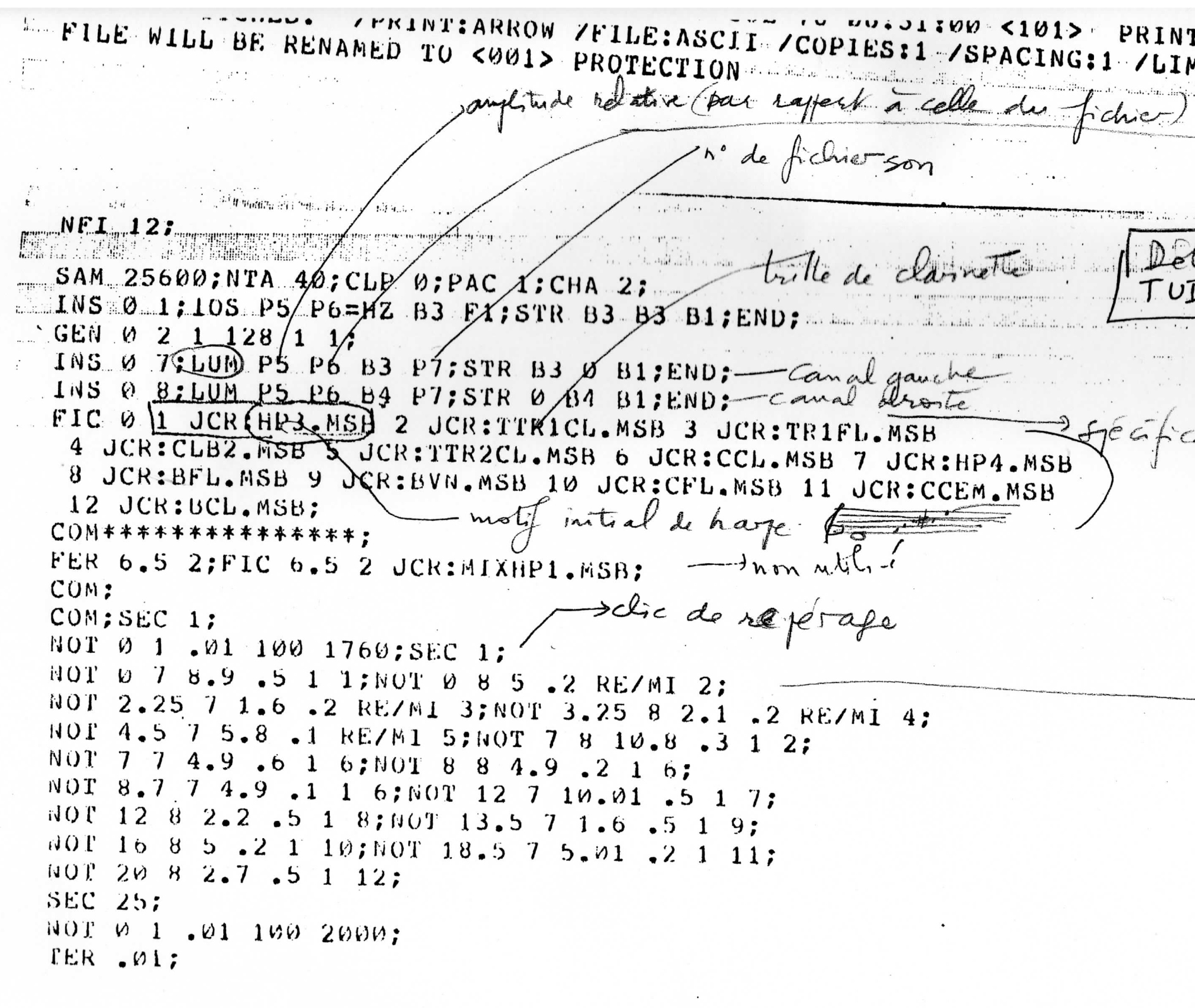

Figure 5. Music V code for the opening of Songes [© Arch. PRISM – Risset].

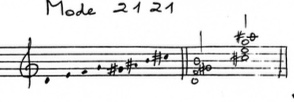

Figure 5 shows the first page of the Music V code, with Risset’s annotations in pencil, of a stereophonic reduction of the opening of Songes [Media 1]. The first part defines the global parameters, such as the sampling rate (“SAM 25600” = 25,600 Hz) and the number of channels (“CHA 2”). Next, “twin” instruments are constructed (“INS 0 8” and “INS 0 7”, the first for the left channel and the second for the right); these instruments perform the same task, namely, reading sound files loaded into the computer’s memory. “LUM” (a module developed by Jean-Louis Richer, a researcher who worked at IRCAM from 1977 to 1981) plays back sound files loaded by the “FIC” module, for example “JCR:HP3.MSB.” Finally, a list of note statements plays the audio files at specified times; for example, the line “NOT 0 7 8.9 .5 1 1” executes at second “0,” instructing instrument “7” (set to output via the right channel) to play the file “JCR:HP3.MSB” (opening harp motif) for “8.9” seconds with the amplitude multiplied by “0.5” and the frequency multiplied by “1” (in other words, at half the original amplitude and without transposition). The line “NOT 0 8 5 .2 RE/MI 2” introduces a frequency change, transposing the trill in the clarinet sample (“JCR:TT1CL.MSB”) to a C. In order to better represent the sonic result of this code, Risset transcribed it using standard notation [Figure 6].

Figure 6. Sketch of the opening passage of Songes [© Arch. PRISM – Risset]. This is a representative transcription of the output of the Music V code.

Media 1. Songes, beginning of the first section (00’00”-00’22”) [© EA CD Wergo, 1988].
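The note statements discussed above can be read as whitespace-separated fields. The parser below is a sketch based only on the fields explained in this section (start time, instrument, duration, amplitude factor, frequency factor). The meaning of the seventh field is an assumption (we take it to be the memory position of the file, by analogy with the “FIC” positions described below), and so is the reading of “RE/MI” as the ratio between equal-tempered D and E, i.e., a transposition down a whole tone. `FileNote`, `parse_not` and `symbol_ratio` are hypothetical names.

```python
from dataclasses import dataclass

# Hypothetical mapping of French note names to semitones above C.
SEMITONES = {"DO": 0, "RE": 2, "MI": 4, "FA": 5, "SOL": 7, "LA": 9, "SI": 11}


def symbol_ratio(token: str) -> float:
    """Read a number, or an assumed 'NOTE/NOTE' equal-tempered frequency ratio."""
    if "/" in token:
        upper, lower = token.upper().split("/")
        return 2 ** ((SEMITONES[upper] - SEMITONES[lower]) / 12)
    return float(token)


@dataclass
class FileNote:
    start: float        # seconds
    instrument: int     # e.g. 7 or 8, the "twin" file-reading instruments
    duration: float     # seconds
    amp_factor: float   # multiplies the file's amplitude
    freq_factor: float  # multiplies the file's frequency (transposition)
    slot: int           # assumption: memory position of the file loaded by FIC


def parse_not(line: str) -> FileNote:
    """Split one 'NOT' statement (optionally ending in ';') into typed fields."""
    fields = line.replace(";", " ").split()
    if len(fields) != 7 or fields[0] != "NOT":
        raise ValueError(f"unexpected statement: {line!r}")
    _, start, instr, dur, amp, freq, slot = fields
    return FileNote(float(start), int(instr), float(dur), float(amp),
                    symbol_ratio(freq), int(slot))


if __name__ == "__main__":
    for stmt in ("NOT 0 7 8.9 .5 1 1", "NOT 0 8 5 .2 RE/MI 2"):
        print(parse_not(stmt))
```

Under this reading, “RE/MI” evaluates to about 0.891, the equal-tempered ratio for a whole tone down.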

In the second passage of the first part of the piece [Media 2], sound files overlap one another, thereby prolonging the sounds. The effect of the spatial assignments is striking. In the lines of code “NOT 3.2 7 4.1 .1 RE/MI 4; NOT 3.3 8 4.1 .1 RE/MI 4,” the sound file “JCR:TR2AL.MSB” (a viola trill) is read by both the left and right instruments, but with a slight timing offset of 100 milliseconds: instrument “7” (left channel) is triggered at “3.2” seconds, and instrument “8” (right channel) at “3.3” seconds. It is important to note that, to load the various sound files as the code progresses, it was necessary to free up space in the system memory. For example, this passage begins by playing back the file “JCR:TR1AL.MSB,” which occupies position “1” (“FIC 0 1”) in memory; after 12 seconds (“FIC 12 1”), the file “JCR:TB2.MSB” replaces it at the same location. This was necessary because, at the time of the work’s creation, memory capacity and processing power were severely limited compared with today.

Media 2. Example of overlapping sound files in the first part of Songes (0’46”–1’03”). [© EA CD Wergo, 1995]. The green and blue markings correspond to the left and right output channels, respectively.
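The memory management described above, in which a later “FIC” statement replaces the file held at a given position, amounts to a simple lookup: the file available at a position at time t is the one most recently loaded there. The sketch assumes each load can be summarised as (time, position, file name); the text shows only “FIC 0 1” and “FIC 12 1”, so the way a file name is attached to a FIC statement is not reproduced. `file_in_slot` is a hypothetical helper.

```python
from typing import Optional

# (load time in s, memory position, file), after "FIC 0 1" and "FIC 12 1".
FIC_LOADS = [
    (0.0, 1, "JCR:TR1AL.MSB"),
    (12.0, 1, "JCR:TB2.MSB"),
]


def file_in_slot(loads, slot: int, t: float) -> Optional[str]:
    """Return the file held at memory position `slot` at time t (None if empty)."""
    current = None
    for load_time, load_slot, name in sorted(loads):
        if load_slot == slot and load_time <= t:
            current = name  # a later load overwrites the earlier file
    return current


if __name__ == "__main__":
    for t in (0.0, 11.9, 12.0, 20.0):
        print(t, file_in_slot(FIC_LOADS, 1, t))
    # Offset between the left/right pair "NOT 3.2 7 ..." and "NOT 3.3 8 ...":
    print(f"{(3.3 - 3.2) * 1000:.0f} ms")
```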

Timbral Space

Organisation of the instrumental material

Consistent with his enduring preoccupation with the fertile relationship between “real” and “simulated” sounds [Risset, 1988; 1996], in this work, Risset attempted to bring the sounds of acoustic instruments and those produced by digital syntheses, i.e., two ostensibly disparate sound worlds, closer together. In the first section of the piece, the instrumental sounds are clearly recognisable. Nonetheless, that is not to say that they are presented in an unaltered form. At the beginning of the work, we do not hear a harp per se, but rather, a sonic object resulting from digital treatments of a recording thereof. Thus, even if the sound world is a representation of the real, it is nonetheless the result of digital processes of transformation, which characterise the work globally.

Risset re-uses certain passages recorded for the mixed-music work Mirages, i.e., the trills [Figure 7] which feature prominently in the passage of overlapped sounds [Media 2], and the short instrumental motifs [Figure 8]. Trills played by numerous instruments (violin, cello, viola, harp, horn, bassoon, flute, oboe, trombone and clarinet) were recorded, whereas the motifs were recorded by just a few, most notably the flute [Figure 9].

The intervallic basis of these motifs is the T-S-T-S (octatonic) mode [Figure 10]. This scale, used by composers such as Rimsky-Korsakov and Olivier Messiaen, capitalises upon the effect of alternating tones and semitones. Beginning with a D, the second pitch is a tone higher (E); the next is a semitone higher (F), then a tone higher again (G), etc. Nonetheless, Risset was not interested in using this scale to generate melodic material; rather, he treated it as a basis for the creation of timbres. The same approach was adopted in Mutations, where a quasi-dodecaphonic sequence [Figure 8] was not treated as a system in its own right, but rather, as a means to generate timbre.

Figure 7. Trills recorded for Mirages and subsequently re-used in Songes [© Arch. PRISM – Risset].

Figure 8. Motifs recorded for Mirages and subsequently re-used in Songes [© Arch. PRISM – Risset].

Figure 9. Motifs recorded exclusively on flute [© Arch. PRISM – Risset].

Figure 10. The octatonic scale, used in Mirages and in Songes [© Arch. PRISM – Risset].
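The alternating tone/semitone construction of the octatonic mode can be generated mechanically. The sketch below works with pitch classes (C = 0 … B = 11); the enharmonic spellings are chosen for readability and are not taken from Figure 10, and `octatonic` is a name introduced here.

```python
# Pitch-class names; the enharmonic spellings are a presentational choice.
NAMES = ["C", "C#", "D", "Eb", "E", "F", "F#", "G", "Ab", "A", "Bb", "B"]


def octatonic(start_pc: int, first_step: int = 2) -> list:
    """Eight pitch classes alternating tones (2) and semitones (1) from start_pc."""
    steps = [first_step, 3 - first_step] * 4  # T-S-T-S... when first_step == 2
    scale = [start_pc % 12]
    for step in steps[:-1]:  # the eighth step would return to the starting pitch
        scale.append((scale[-1] + step) % 12)
    return scale


if __name__ == "__main__":
    print([NAMES[pc] for pc in octatonic(2)])  # starting on D, as in the text
    distinct = {frozenset(octatonic(pc)) for pc in range(12)}
    print(len(distinct), "distinct transpositions")
```

Starting on D yields D, E, F, G, Ab, Bb, B, C#. Because the pattern repeats every minor third, the scale has only three distinct transpositions, which is why Messiaen counted it (his mode 2) among his modes of limited transposition.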

The “Esquisses” Software

In 1978, while Risset was working on Mirages, he became interested in research led by John Grey and David Wessel on the concept of timbral space [Wessel, 1978]. The “Esquisses” software, developed at IRCAM by Wessel and Bennett Smith, served as a “sketch book” with which to categorise timbres. Initially called “Sketchpad,” this programme allowed “musicians to obtain a representation of the subjective, perceptible relationships among musical elements, and […] may be used as a kind of sketchbook” [Risset, 1991, p. 279]. It provided Cartesian representations of multiple sound samples (although representations in more dimensions were possible, the x and y axes were sufficient in most cases). Wessel’s first experiments used 24 samples, each of which corresponded to a recording of the same musical entity, i.e., an Eb in the same mid-register, with the same duration and volume, but played on different instruments. Using auditory criteria, it was possible to classify these samples on a subjective scale from 0 to 10 (0 for identical and 10 for maximally different), and on a second scale according to the intensity of the onset (transient) of the note. The x axis described spectral energy, i.e., “brilliance,” while the y axis represented the nature of the transient.

Figure 11. A “map” of sounds used in Songes, devised using the “Esquisses” software [© Arch. PRISM – Risset].

The “sonic map” used in Songes with the instrumental motifs taken from Mirages [Figure 11] allowed Risset to “position” the various trill samples in an acoustic space. Point “V” (harp), for example, is relatively high on the y axis, indicating that it is a bright sound. In contrast, point “L” (trombone I) is far lower, and is therefore more mellow in nature. Point “L” (trombone II) is low and farthest to the right, indicating that it has a strong transient but lacks brilliance. As shown, string instruments (harp, violin, viola and cello) occupy the left half of the graph, indicating more attenuated transients than those of the wind instruments (flute, oboe, clarinet and bassoon), which are positioned on the right-hand side.

This “map” allowed the composer to use timbre as a discrete musical parameter, much like pitch, for example, with the objective of achieving transpositions of Klangfarbenmelodien in the same way that one can transpose the pitches of a melodic sequence. To this end, it was necessary to define “timbral intervals” which could then be transposed while maintaining the same relationships to each other. In the above example, Risset added a line, or vector, between points “U” (harp) and “O” (viola), corresponding to a “timbral interval.” The same “interval” was found to exist between points “H” (flute) and “D” (horn). As such, the composer applied timbral transpositions in a manner akin to traditional transpositions of pitch. Nonetheless, the result of this process was not entirely to Risset’s satisfaction; he found that such transpositions were not as perceptible to the ear as those applied to pitch. As Risset depended upon his ear to evaluate the efficacy of any musical operation [Risset, 1988], he decided against structuring his work according to this criterion [Guillot, 2008, p. 86].
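The “timbral interval” reasoning can be expressed as vector arithmetic on the map: the interval is the displacement from one point to another, and transposing it means adding that displacement to a new starting point and looking for the nearest available sound. The coordinates below are invented for illustration and are not read from Figure 11; only the point labels follow the text. `TIMBRE_MAP`, `timbral_interval` and `transpose` are hypothetical names.

```python
import math

# Hypothetical (x, y) positions; NOT measured from Risset's map in Figure 11.
TIMBRE_MAP = {
    "U (harp)": (1.0, 7.0),
    "O (viola)": (2.5, 5.0),
    "H (flute)": (6.0, 6.5),
    "D (horn)": (7.4, 4.6),
    "L (trombone)": (8.5, 2.0),
}


def timbral_interval(a: str, b: str) -> tuple:
    """Displacement vector from point a to point b on the timbre map."""
    (ax, ay), (bx, by) = TIMBRE_MAP[a], TIMBRE_MAP[b]
    return (bx - ax, by - ay)


def transpose(start: str, interval: tuple) -> tuple:
    """Apply an interval to `start`; return the target point and the nearest other sound."""
    sx, sy = TIMBRE_MAP[start]
    target = (sx + interval[0], sy + interval[1])
    nearest = min((name for name in TIMBRE_MAP if name != start),
                  key=lambda name: math.dist(TIMBRE_MAP[name], target))
    return target, nearest


if __name__ == "__main__":
    step = timbral_interval("U (harp)", "O (viola)")
    print(step, transpose("H (flute)", step))
```

With these made-up positions, applying the U→O interval to H lands closest to D, mirroring the correspondence described above; as Risset observed, such a geometric match does not guarantee that the two “intervals” are heard as equivalent.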

Simulacra of Bells

Morphology

Simulacra of bells are undoubtedly among the most emblematic sonorities in Risset’s music. From the time of his first experiments with synthesis, he attempted to understand the spectral characteristics of “real” sounds by studying the timbres of brass instruments such as the trumpet [Risset, 1966], as well as those of bells. The objets sonores he created through this research feature prominently in his music, from Computer Suite from Little Boy (1968) to Resonant Sound Spaces (2001–02). The publication of the original code of his library of synthesised sounds [Risset, 1969] and elsewhere, as well as analyses of pieces which used digital technologies [Lorrain, 1980], allow us to examine the morphologies of these sound objects, the evolution of Risset’s coding and the way in which he re-appropriated his own material throughout his career.

The second part of Songes makes heavy use of simulated bells [Media 3]. The code that generates these sounds may be divided into two basic parts [Figure 12]: a) definitions of objects, and b) commands to trigger those objects at specific points in time. Using the “COM” module, the composer created two synthetic bell sounds: “430 Bell” [Media 4] and “420 Gong” [Media 5]. He annotated their characteristics in pencil, as shown in Figure 12.

Media 3. Songes, beginning of the second part, “Cloches” (01’37”–01’59”) [© EA CD Wergo, 1988]

Figure 12. Music V code for the second part of Songes [© Arch. PRISM – Risset]

Media 4. “430 Bell” from Risset’s sound library [© EA CD Wergo, 1995]

Media 5. “420 Gong” from Risset’s sound library [© EA CD Wergo, 1995]

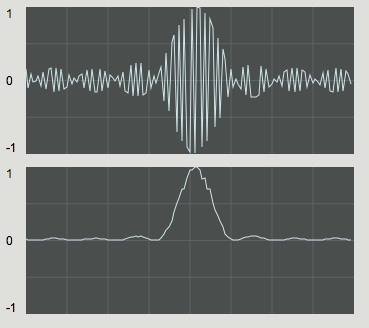

These objects are testaments to the research undertaken by Risset in the 1960s, on sound synthesis but also in the field of psychoacoustics. Any harmonic sound may be decomposed into a set of sinusoidal components whose amplitudes determine the timbre. However, this interpretation is incomplete, as timbre also depends on the temporal evolution of the intensity of each component [Figure 13].

Figure 13. Three-dimensional analysis, created by Patrick Sanchez, of a synthesised bell sound. Time and frequency are represented on the horizontal axes, while amplitude is represented vertically [Risset, 2001, p. 136].

“Cloche 1280” [“Bell 1280”]

To replicate the temporal characteristics of the components of a bell sound, the code made use of “structures” (module “SV1”), a functionality of Music V which made it possible to encapsulate data defining complex sound objects. Inharmonic structure “1280” (a bell-type object) [Figure 14] comprises nine components, of which the frequencies (in Hz), durations (in seconds) and amplitudes (in arbitrary units) are specified in the three columns on the right. The sound is played back by instrument 3 and the perceived pitch corresponds to 349 Hz. The global amplitude is set to “975” and the duration is defined by the longest-lasting component, i.e., 24 seconds.

Figure 14. Music V code of sound object “1280,” used in Inharmonique and re-created here by Denis Lorrain based on the original [Lorrain, 1980].
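To make the idea of an SV1 structure concrete, here is a minimal Python sketch of additive synthesis in which every component carries its own frequency, duration and amplitude, and the object lasts as long as its longest component. The component values are hypothetical placeholders (the actual values of “1280” appear only in Figure 14), and the exponential decay used as each component’s envelope is an assumption, not a transcription of Risset’s instrument.

```python
import math

# Hypothetical (frequency Hz, duration s, amplitude) triples laid out like an
# SV1 structure. They are placeholders, NOT the values of "1280" (Figure 14).
STRUCTURE = [
    (224.0, 24.0, 150.0),
    (368.0, 16.0, 100.0),
    (476.0, 12.0, 180.0),
    (680.0, 9.0, 120.0),
    (800.0, 7.0, 100.0),
    (1096.0, 5.0, 90.0),
    (1200.0, 4.0, 90.0),
    (1504.0, 3.0, 70.0),
    (1628.0, 2.0, 75.0),
]


def total_duration(structure):
    """The object lasts as long as its longest-lasting component."""
    return max(dur for _, dur, _ in structure)


def envelope(t, dur):
    """Assumed exponential decay: 1.0 at t = 0, -60 dB at t = dur, then silence."""
    if t < 0.0 or t >= dur:
        return 0.0
    return 10.0 ** (-3.0 * t / dur)


def bell_sample(structure, t):
    """Additive synthesis: sum of sinusoids, each decaying over its own duration."""
    return sum(
        amp * envelope(t, dur) * math.sin(2.0 * math.pi * freq * t)
        for freq, dur, amp in structure
    )


def render(structure, sample_rate=8000, seconds=None):
    """Render the object to a list of samples (pure Python, so keep it short)."""
    if seconds is None:
        seconds = total_duration(structure)
    n = int(seconds * sample_rate)
    return [bell_sample(structure, i / sample_rate) for i in range(n)]
```

After one second, for instance, the highest placeholder component (2 s) has dropped by 30 dB while the lowest (24 s) has lost only about 2.5 dB, so the balance of the spectrum, and hence the timbre, keeps changing as the sound decays.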

In Songes, the instruction “PLF 0 6” specifies how structure “1280” is to be played. The line “PLF 0 6 2 1280 0 0 0 0 3; NOT 16 1 0 100 Sol#; NOT 16.2 1 0 100 FA” [Figure 12b] indicates that the two “NOT” instructions apply to “1280” via instrument “1,” with an overall amplitude of “100.” The first “NOT” is triggered at time “16” and the second at “16.2.” The value “0” indicates that the duration defined in the structure is left unchanged. Since the reference amplitude of “NOT” is “100,” the amplitudes of the components, as defined in “SV1 0 1280,” are multiplied by 100/975, where 100 is the reference amplitude and 975 is the global amplitude of structure “1280.” Finally, the first “NOT” is transposed to G# (415.3 Hz) and the second to F (349 Hz), so all of the component frequencies are multiplied by 415.3/349 (about 1.19) for the first note and by 349/349 (i.e., left untransposed) for the second.
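The arithmetic of this paragraph can be checked with a few lines of Python. The sketch assumes that a structure is stored as a list of (frequency, duration, amplitude) triples and that a “NOT” simply rescales every component; the function name `apply_note` is hypothetical and does not reproduce Music V’s internal routines.

```python
import math


def apply_note(structure, note_amp, global_amp, target_hz, ref_hz):
    """Rescale every (freq, dur, amp) component of a structure for one NOT.

    Amplitudes are scaled by note_amp / global_amp and frequencies by
    target_hz / ref_hz; durations are kept, since the NOT duration field is 0.
    """
    amp_factor = note_amp / global_amp
    freq_factor = target_hz / ref_hz
    return [(f * freq_factor, d, a * amp_factor) for f, d, a in structure]


# Hypothetical one-component structure at the nominal pitch of "1280" (349 Hz),
# with an amplitude equal to the global amplitude of the structure (975).
component = [(349.0, 24.0, 975.0)]

g_sharp = apply_note(component, note_amp=100, global_amp=975, target_hz=415.3, ref_hz=349.0)
f_natural = apply_note(component, note_amp=100, global_amp=975, target_hz=349.0, ref_hz=349.0)
```

The scaling factors themselves are 100/975 ≈ 0.103 for the amplitudes and 415.3/349 ≈ 1.190 for the G# note; for the F note the frequency factor is exactly 1, so only the amplitudes change.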

With the original code, as well as other information made available by the composer, it is possible to programme the same operations in a more contemporary language (Music V became obsolete in the 1980s, being largely superseded by programmes such as CMusic and Csound). While composing Resonant Sound Spaces, for example, Risset used a Max patch created by António de Sousa Dias, which imported the original Music V code to synthesise sounds from his library in real time [Risset et al., 2002]. Applying the same approach, we have created various web applications in the Faust language which resynthesise or emulate the sound objects created by Risset [Interactive application 1].

Interactive application 1. Resynthesis/emulations of “Bell 1280” using the Faust language, allowing control over the amplitudes, durations and frequencies.

In the early days of digital sound synthesis, creating sounds was an onerous task, as mainframe computers were slow and difficult to operate. Interaction between humans and computers took place in deferred time, and there were often long delays between submitting a command (via punch cards), loading the digital data and finally hearing the synthesised result. This constraint shaped working methods; it was therefore not uncommon to reuse material verbatim from one work to another.

The sound objects with “SV1” structures, as well as the “PLF 6” algorithms, which are able to read and create new structures, remained essentially unaltered throughout Risset’s career, even after punch cards had become obsolete. Consequently, excerpts of code were reused in multiple works; for example, structures “1210”, “1250”, “1280”, “1320”, “1360” and “1400” in Songes had been used previously in Inharmonique, notably in the passage labelled “BELLSB,” as analysed by Lorrain [1980]. In contrast, objects “1100” and “1150,” present in the first part of Songes and constructed using the octatonic scale [Figure 12a], were original. In this analysis, we name these objects “Mirage Bell 1” and “Mirage Bell 2”.

“Mirage Bells 1 and 2”

In the original code [Figure 12a], “Mirage Bell 1” [Figure 15] corresponds to the structure “SV1 0 1100,” which contains eight component frequencies, of which only the first four are defined. After analysing “Mirage Bell 2” [Figure 16], which corresponds to structure “SV1 0 1150,” it was possible to determine the missing components of the former, as these are also based on the T-S-T-S (tone-semitone) intervallic sequence. The structures of these bell objects were recreated in Faust. With control over each spectral component, it is possible to reproduce Risset’s sounds as well as to create variants of them [Interactive application 2].

Figure 15. “Mirage Bell 1”. The part highlighted in yellow is based on an analysis of “Mirage Bell 2”.

Figure 16. “Mirage Bell 2”.

Interactive application 2. Resynthesis/emulation of the “Mirage Bell” objects in the Faust language, allowing control over the frequency and amplitude of each of the 8 spectral components.
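The T-S-T-S logic used to complete “Mirage Bell 1” can be expressed as a short Python sketch. It assumes equal temperament and places all eight components within a single octave above a hypothetical base frequency; the actual register of each component in Risset’s structures is given only in Figures 15 and 16, so the numbers produced here illustrate the intervallic pattern, not his exact frequencies. The helper `complete_components` is likewise hypothetical.

```python
import math


def octatonic_offsets(count=8, start_with_tone=True):
    """Semitone offsets obtained by alternating tones (2) and semitones (1)."""
    offsets, semitones, tone = [], 0, start_with_tone
    for _ in range(count):
        offsets.append(semitones)
        semitones += 2 if tone else 1
        tone = not tone
    return offsets


def octatonic_components(base_hz, count=8, start_with_tone=True):
    """Equal-tempered component frequencies following the T-S-T-S pattern."""
    return [base_hz * 2.0 ** (s / 12.0) for s in octatonic_offsets(count, start_with_tone)]


def complete_components(known_hz, count=8, start_with_tone=True):
    """Hypothetical helper: keep the known components and fill in the rest by
    continuing the same alternating pattern from the first known one."""
    full = octatonic_components(known_hz[0], count, start_with_tone)
    return list(known_hz) + full[len(known_hz):]
```

With a base of 440 Hz, for instance, `octatonic_components(440.0)` yields offsets of 0, 2, 3, 5, 6, 8, 9 and 11 semitones above A4, i.e. the pitch classes A, B, C, D, E♭, F, F♯ and G♯, and `complete_components` recovers the last four from the first four.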

Transpositions and mutations

In Songes, the octatonic mode is not only used to construct timbres; Risset also applies it to transpose his objets sonores and to create connections among varying morphologies. For example, at the beginning of the second part of the work, this mode is used to structure the overlapping melodic/instrumental motifs and the simulated bell sounds [Media 6]. Sub-routine “PLF 6” (described above) applies a global transposition; by changing the frequency of the object, all associated spectral components undergo the corresponding transposition.

Media 6. Songes, beginning of the second part (1’37–1’59), demonstrating the transposition of objets sonores resulting from the first page of Music V code. Each object contained within the “SV1” structures is called up, played by one of the instruments and output via the left (green) or right (blue) channels [© EA CD Wergo, 1995 / Arch. PRISM – Risset].

Using additive synthesis, Risset was able to transform simulated bell sounds into dynamic textures by controlling how the intensity of each of their spectral components evolves. In the second part of Songes, the synthesised gong and bell sounds progressively dissolve through the application of non-synchronous envelopes. As a result, each individual component becomes momentarily audible, creating an arpeggio-like effect [Risset, 1989]. Mutations also makes use of such a process, presaging the spectral techniques of the 1970s. At the beginning of that work, a melodic motif merges into a harmony, which then evolves into a gong-like timbre [Media 7].

Media 7. Opening of Mutations (00’00”-00’10”) [© EA CD Ina/GRM, 1987].
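The principle of non-synchronous envelopes can be illustrated with a minimal Python sketch. Each component of a hypothetical bell receives its own raised-cosine envelope peaking at a different moment; the envelope shape and timing are assumptions chosen for clarity, as the text does not specify Risset’s envelopes. Because the envelopes are staggered, the loudest component changes over time, which is what produces the arpeggio-like effect.

```python
import math


def bump(t, center, width):
    """Assumed raised-cosine envelope of total length `width`, peaking at `center`."""
    x = (t - center) / width
    if abs(x) >= 0.5:
        return 0.0
    return 0.5 * (1.0 + math.cos(2.0 * math.pi * x))


def component_gains(t, n_components, spacing, width):
    """Gain of each component at time t; component k peaks at k * spacing."""
    return [bump(t, k * spacing, width) for k in range(n_components)]


def loudest_component(t, n_components, spacing, width):
    """Index of the component that dominates at time t (None if all are silent)."""
    gains = component_gains(t, n_components, spacing, width)
    best = max(range(n_components), key=lambda k: gains[k])
    return best if gains[best] > 0.0 else None


def sample(t, freqs, spacing, width):
    """Additive synthesis with staggered (non-synchronous) envelopes."""
    gains = component_gains(t, len(freqs), spacing, width)
    return sum(g * math.sin(2.0 * math.pi * f * t) for g, f in zip(gains, freqs))
```

With `spacing=0.5` and `width=1.0`, each component sounds alone at the instant it peaks and overlaps only with its neighbours in between, so eight components yield an eight-note figure lasting about four seconds.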

In Songes, Risset transforms the initial morphologies of the components of synthesised bells, causing the sound to evolve into a vivid texture. Then, these textural components are further transformed into harmonic contours, i.e., “frequency profiles” with upward motion (this technique is applied and further developed in the “Coda”). The intervallic relationships in these textures are the same as those used in the opening section (“Mirages”). The spectral analysis presented below [Media 8] illustrates this process: interpolation between bell sounds and fluid textures, all while conserving the octatonic intervallic structure.

Media 8. Sonic mutation in the second part of Songes (3’08”-3’32”): bell → bell-like textures → textures-harmonic contour → harmonic contour → octatonic mode-texture [© EA CD Wergo, 1995].

The stylistic unity of the “coda”

Frequency contouring

Only two techniques, frequency contouring and phasing, were used by Risset in the third part of Songes, conferring upon this “coda” a high degree of stylistic unity. The construction of frequency contours involves the creation of ascending and descending frequency profiles, a technique that the composer explored further in Contours [Risset, 1990, p.6]. The code which achieves this is one of the most distinctive algorithms in Songes. The composer used additive synthesis in a wholly original way: not to create new sonic entities, but to define frequency profiles which follow periodic waveforms. The “BDS4” algorithm is applied to “twin” instruments (“INS 2” and “INS 3”), as well as to a third instrument dedicated to reverb (“INS 50”) [Figure 17]. A block diagram is included below to make this algorithmic network easier to follow [Figure 18].

Figure 17. Music V code controlling the frequency contour [© Arch. PRISM – Risset].

Figure 18. Block diagram of algorithm “BDS4”.

Oscillator (a) operates at frequency “p8,” and its waveform is subsequently multiplied by itself (b). Squaring a waveform returns only non-negative values [Figure 19]. At the output of (b), a periodic signal returns values between 0 and 1, which are then multiplied by the fixed value “p6,” so that the contour spans 0 to “p6” Hz. In the first iteration of the “BDS4” algorithm, “p6” is set to 7,500 Hz, close to the pitch B♭8 (about 7,459 Hz). Oscillator (d) and offset (e), a value which defines the “depth” of the oscillator, are capable of modulating “p6.” However, Risset did not apply such a modulation here, leaving frequency “p10” at 0 Hz. As a result, the final oscillator generates a sinusoid whose frequency is given by the output of module “MLT” (c) [Figure 17]. This algorithm generates a frequency contour which is evocative of birdsong.

Figure 19. Creation of a unipolar waveform by multiplying the original waveform by itself.
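The signal chain of Figure 18 can be approximated in a few lines of Python. This sketch assumes that oscillator (a) reads a plain sine wave (Risset’s actual tables are those of Figure 21) and omits oscillator (d) and offset (e), since their modulation is disabled when “p10” is 0 Hz. The final oscillator accumulates phase so that its frequency follows the contour sample by sample. The parameter names mirror the Music V fields “p6” and “p8”, but the functions themselves are hypothetical.

```python
import math


def contour_hz(t, p6=7500.0, p8=1.0):
    """Instantaneous frequency produced by the (a) -> (b) -> (c) chain."""
    a = math.sin(2.0 * math.pi * p8 * t)  # oscillator (a): values in [-1, 1]
    b = a * a                             # (b) squaring: values in [0, 1]
    return p6 * b                         # (c) multiplication by p6: 0 to p6 Hz


def render_contour(seconds, sample_rate=48000, p6=7500.0, p8=1.0, amp=1.0):
    """Sine oscillator whose frequency follows contour_hz (phase accumulation)."""
    out, phase = [], 0.0
    for i in range(int(seconds * sample_rate)):
        out.append(amp * math.sin(phase))
        phase += 2.0 * math.pi * contour_hz(i / sample_rate, p6, p8) / sample_rate
    return out
```

Squaring a sine wave at “p8” Hz yields a contour that repeats at twice that rate, since sin²x = (1 − cos 2x)/2: with the default values, the pitch rises from 0 to 7,500 Hz and falls back twice per second. The sample rate must also exceed 2 × p6 (15 kHz here) to avoid aliasing at the top of the contour.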

The complexity of the above algorithm may be attributed to the limitations of the Music V software. With today’s tools, the same result could be obtained relatively easily [Interactive application 3]. When Songes was created, computer memory and storage capacity were extremely limited, and mathematical operations were confined to relatively basic functions. Under such circumstances, additive synthesis was a viable means of obtaining satisfactory frequency contours. In the original “BDS4” code, five waveforms were used to create the desired frequency contours [Figures 20 and 21]; however, in the “coda” of Songes, Risset used only the first.

Interactive application 3. Resynthesis/emulation of frequency contours in the Faust language, allowing separate control over the frequency and amplitude, frequency offset, panning and reverb of each oscillator.

Figure 20. Code for the oscillator waveforms (a) [© Arch. PRISM – Risset].

Figure 21. The five waveforms associated with oscillator (a).

Low-register pedal tone with phasing

Phasing creates beating by superimposing two or more nearly identical frequencies. The pedal tone which appears in the “coda” (a low B♭ of about 58 Hz) makes use of this effect in a manner that is “quite refined, achieved in a simple way through precise control of the digital synthesis” [Lorrain, 1980]. Unfortunately, the original Music V code for this material has been lost. We therefore examined a similar passage in Inharmonique in which Risset applied the same effect, which he named “PHASE6” [Lorrain, 1980]. The code in question produces the same pitch which, in Songes, is enriched through the addition of eight neighbouring frequencies (differing from the central pitch by 0.01 to 0.04 Hz). In Inharmonique, Risset used nine parallel oscillators to generate nine components for each event [Figure 22]. The frequency of the first oscillator corresponds to B♭ (58 Hz), while the others are slightly higher or lower by multiples of a fixed interval. For example, if the interval is set at 0.01 Hz, the frequencies of the oscillators will be 58.01 Hz, 57.99 Hz, 58.02 Hz, 57.98 Hz, etc. This results in a “cascade” of slightly divergent components which produces a phasing effect, alternately amplifying and attenuating the intensity of the signal [Interactive application 4].

Figure 22. Block diagram of “PHASE6” in Inharmonique [Lorrain, 1980].

Interactive application 4. Emulation of the phasing effect in Faust, allowing control of the reference frequency and the divergent interval.
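The “PHASE6” principle can be checked numerically with a minimal Python sketch, assuming nine cosine oscillators of equal amplitude that all start in phase (the text does not specify Risset’s amplitudes or phases). Summing frequencies spaced symmetrically around 58 Hz gives a 58 Hz tone multiplied by a slowly varying envelope, (1 + 2 Σ cos(2πkΔt)) / 9 for k = 1 to 4, which returns to its maximum every 1/Δ seconds: 100 s for Δ = 0.01 Hz.

```python
import math


def phasing_frequencies(center_hz=58.0, step_hz=0.01, pairs=4):
    """Centre frequency plus `pairs` neighbours above and below it."""
    freqs = [center_hz]
    for k in range(1, pairs + 1):
        freqs += [center_hz + k * step_hz, center_hz - k * step_hz]
    return freqs


def phasing_sample(t, center_hz=58.0, step_hz=0.01, pairs=4):
    """Normalised sum of equal-amplitude cosines, all in phase at t = 0."""
    freqs = phasing_frequencies(center_hz, step_hz, pairs)
    return sum(math.cos(2.0 * math.pi * f * t) for f in freqs) / len(freqs)


def phasing_envelope(t, step_hz=0.01, pairs=4):
    """Closed-form slow envelope of the sum: it peaks every 1 / step_hz seconds."""
    total = 1.0 + 2.0 * sum(
        math.cos(2.0 * math.pi * k * step_hz * t) for k in range(1, pairs + 1)
    )
    return total / (2 * pairs + 1)
```

The closed form follows from cos(a + b) + cos(a − b) = 2 cos a cos b applied to each symmetric pair. At t = 50 s, for example, the envelope is down to 1/9 of its peak.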

Composing space

Although the spatial dimension is a foundation of all electronic and electroacoustic music, the treatment of space in Songes plays a key role in the work’s unity, in the sense that spatial processes are active throughout the entire work. As stated above, to achieve striking stereophonic effects, Risset employed “twin” instruments whose intensities are meticulously controlled in order to establish and vary the positions of virtual sound sources. Let us return briefly to the first listening example in this analysis [Media 1], i.e., the opening seconds of Songes. To elucidate the spatialisation applied to each objet sonore, i.e., its placement at a given point between left and right, the relevant excerpts of the Music V code have been highlighted in different colours, and the corresponding passages transcribed in traditional musical notation [Media 9].

Media 9. Songes, opening passage (00’00”-00’22”) [© EA CD Wergo, 1988 / Arch. PRISM – Risset] with animations applied to the Music V code and a transcription in traditional musical notation. The parts highlighted in green and blue correspond to instruments sent to the left and right outputs, respectively.

In the “Coda”, Risset applies “panoramic” effects to create spatial trajectories far more complex than anything used in the first part of the work. He drew inspiration for this from the pioneering work of his friend and colleague John Chowning, who in the early 1970s had created a spatialisation algorithm capable of producing extremely robust illusions of sonic space [Chowning, 1971]. In the code used to synthesise the frequency contours [Figure 17], as shown in its block diagram [Figure 18], two instruments are simultaneously active (line 10 “INS 0 2” and line 15 “INS 0 3”). The first outputs a signal to the left channel and the second to the right; the first instructs oscillator (j) to use waveform “f7” [Figure 23, upper], while the second instructs oscillator (k) to use waveform “f8” [Figure 23, lower]. Because the two waveforms mirror each other, as the amplitude of one oscillator increases, that of the other decreases toward zero. The result is a progressive transition from left to right. Before entering (j) and (k), the same signal is output from oscillator (h) and directed to (i), where two reverb modules are active.

Figure 23. Approximated waveforms illustrating the panning effect produced by oscillators (j) and (k) in the “BDS4” algorithm.
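The complementary gains of oscillators (j) and (k) can be sketched in Python as follows. Because Figure 23 only approximates “f7” and “f8”, this sketch assumes straight-line ramps whose gains sum to 1; an equal-power law (gains whose squares sum to 1) is included as a commonly used alternative, not as a description of Risset’s tables. The same mono signal, standing in for the output of oscillator (h), is sent to both channels; the function names are hypothetical.

```python
import math


def pan_gains(position, law="linear"):
    """Left/right gains for position 0 (hard left) to 1 (hard right)."""
    p = min(max(position, 0.0), 1.0)
    if law == "linear":        # assumed mirror-image ramps, like f7 / f8
        return 1.0 - p, p
    if law == "equal_power":   # alternative: constant total power
        return math.cos(p * math.pi / 2.0), math.sin(p * math.pi / 2.0)
    raise ValueError(f"unknown panning law: {law}")


def render_sweep(freq_hz, seconds, sample_rate=8000, law="linear"):
    """Move a sine tone from left to right over `seconds` seconds."""
    left, right = [], []
    for i in range(int(seconds * sample_rate)):
        t = i / sample_rate
        x = math.sin(2.0 * math.pi * freq_hz * t)  # shared mono signal
        g_left, g_right = pan_gains(t / seconds, law)
        left.append(g_left * x)
        right.append(g_right * x)
    return left, right
```

With the linear law the midpoint gives 0.5 in each channel, so the summed power drops there by about 3 dB; this dip is the usual reason for preferring an equal-power curve.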

At the conclusion of Songes, the sonic trajectories become more and more complex, creating a deceptive space reminiscent of the paradoxical sounds which Risset had developed through his scientific and musical research [Risset, 1978a; 1978b]. Songes thus traces a musical journey from a realistic world to one which is wholly illusory.

Conclusion

Songes marks the pinnacle of Risset’s first creative period (1964 to 1979). It encapsulates all of the composer’s priorities and concerns during this time: his reticence towards real-time processes; the re-appropriation of code used in previous works; research into the psychoacoustic effects of ambiguous harmonies and timbres constructed using timbre-generating intervallic relationships; the creation of bell simulacra and their transformation into fluid textures; and the exploration of Chowning’s spatial illusions. Nonetheless, Songes also signalled the beginning of Risset’s second compositional period (1980 to 1991), in which he embraced pre-recorded sounds for the conception of new, dream-like sonic worlds. Risset adopted the position of the physiologist Jan Evangelista Purkyně, for whom illusions are “sensorial errors but perceptual truths.” While illusions are ubiquitous in Risset’s musical and scientific corpus, they are achieved in Songes without the real-time technologies (such as Disklaviers coupled with Max/MSP) that he would adopt in his third and final compositional period (1991 to 2016).

Access to Risset’s computer code is essential both for analysing his works and for gaining a proper appreciation of his creative process. The emulations of Risset’s synthesis objects, created here in the Faust language, are intended not only to illustrate his compositional processes, but also to facilitate access to original material generated in the Music V language [Svidzinski et Bonardi, 2018]. This approach is in line with the wishes of Jean-Claude Risset, who always strove to deepen interactions between art, science and technology.

Resources

Texts

[Chion, 1988] – Michel Chion, “Les deux espaces de la musique concrète”, in Francis Dhomont (ed.), Lien – revue d’esthétique musicale, “L’espace du son I”, Ohain: Éditions Musiques et Recherches, 1988, pp. 31-33.

[Chowning, 1971] – John Chowning, “The Simulation of Moving Sound Sources”, Journal of the Audio Engineering Society, vol. 19, no. 1, 1971, pp. 2-6. (Republished in Computer Music Journal, vol. 1, no. 3, 1977, pp. 48-52.)

[Di Scipio, 2000] – Agostino Di Scipio, “An analysis of Jean-Claude Risset’s Contours”, Journal of New Music Research, vol. 29, no. 1, 2000, pp. 1-21.

[Di Scipio, 2002] – Agostino Di Scipio, “A Story of Emergence and Dissolution: Analytical Sketches of Jean-Claude Risset’s Contours”, in Thomas Licata (ed.), Electroacoustic Music: Analytical Perspectives, Westport: Greenwood Press, 2002, pp. 151-186.

[Guillot, 2008] – Mathieu Guillot, Jean-Claude Risset : Du Songe au son, Paris: L’Harmattan, 2008.

[Koblyakov, 1984] – Lev Koblyakov, “Jean-Claude Risset: Songes, 1979”, Contemporary Music Review, vol. 1, no. 1, 1984, pp. 183-185. (Translated into French by Marie-Stella Pari and published in L’Ircam, une pensée musicale, Paris: Éditions des Archives Contemporaines, pp. 183-185.)

[Lorrain, 1980] – Denis Lorrain, “Inharmonique : analyse de la bande magnétique de l’œuvre de J.-C. Risset”, IRCAM Activities Report, No. 26, Paris – Centre Georges Pompidou, 1980 (online: http://articles.ircam.fr/textes/Lorrain80a/).

[Mathews, 1963] – Max Mathews, “The Digital Computer as a Musical Instrument”, Science – New Series, vol. 142, no. 3592, 1963, pp. 553-557. (Republished in the booklet of the CD Computer Music Currents 13 – The Historical CD of Digital Sound Synthesis, Wergo 282 033-2, 1995.)

[Mathews, 1969] – Max Mathews (ed.), The Technology of Computer Music, Cambridge: M.I.T. Press, 1969.

[Risset, 1966] – Jean-Claude Risset, Computer Study of Trumpet Tones, New Jersey: Prentice Hall / Englewood Cliffs, 1966.

[Risset, 1969] – Jean-Claude Risset, An Introductory Catalogue of Computer Synthesized Sounds, New Jersey: Murray Hill, 1969. (Republished in the booklet of the CD Computer Music Currents 13 – The Historical CD of Digital Sound Synthesis, Wergo WER 282 033-2, 1995).

[Risset, 1978a] – Jean-Claude Risset, “Paradoxes de hauteur”, IRCAM Activities Report, No. 10, 1978 (online: http://articles.ircam.fr/textes/Risset78a/index.html).

[Risset, 1978b] – Jean-Claude Risset, “Hauteur et timbre de sons”, IRCAM Activities Report, No. 11, 1978 (online: http://articles.ircam.fr/textes/Risset78a/index.html).

[Risset, 1978c] – Programme note for Mirages (online: https://brahms.ircam.fr/works/work/11500/#program).

[Risset, 1986] – Jean-Claude Risset, “Timbre et synthèse des sons”, Analyse Musicale, No. 3, 1986, pp. 9-20.

[Risset, 1988] – Jean-Claude Risset, “Perception, environnement, musiques”, InHarmoniques, No. 3 “Musique et perception”, Paris: IRCAM / Christian Bourgois, 1988, pp. 10-42.

[Risset, 1989] – Jean-Claude Risset, “Paradoxical Sounds / Additive Synthesis of Inharmonic Tones”, in Max Mathews and John Pierce (eds.), Current Directions in Computer Music Research, M.I.T. Press, 1989, pp. 149-163.

[Risset, 1990] – Jean-Claude Risset, “Composer le son : expériences avec l’ordinateur, 1964-1989”, Contrechamps, No. 11, 1990, pp. 107-126.

[Risset, 1991] – Jean-Claude Risset, “Musique, recherche, théorie, espace, chaos”, InHarmoniques, No. 8/9, 1991, pp. 272-316.

[Risset, 1996] – Jean-Claude Risset, “Real-world Sounds and Simulacra in my Computer Music”, Contemporary Music Review, vol. 15, no. 1-2, 1996, pp. 29-48.

[Risset, 2001] – Jean-Claude Risset, “Problèmes posés par l’analyse d’œuvres musicales dont la réalisation fait appel à l’informatique”, in Analyse et création musicale (Proceedings of the 3rd European Musical Analysis Conference, Montpellier, 1995), Paris: L’Harmattan, 2001, pp. 131-160.

[Risset et al., 2002] – Jean-Claude Risset, Daniel Arfib, António de Sousa Dias, Denis Lorrain and Laurent Pottier. “De Inharmonique à Resonant Sound Space : temps réel et mise en espace”, Proceedings of the 19th Journées d’Informatique Musicale, Marseille, May 29-31 2002 (online: http://jim.afim-asso.org/jim2002/articles/L10_Risset.pdf).

[Stroppa, 1984] – Marco Stroppa, “Sur l’analyse de la musique électronique”, in L’Ircam, une pensée musicale, Paris: Éditions des Archives Contemporaines, 1984, pp. 187-193. (English translation: “The Analysis of Electronic Music”, Contemporary Music Review, vol. 1, no. 1 “Musical Thought at IRCAM”, 1984, pp. 175-180.)

[Svidzinski et Bonardi, 2018] – João Svidzinski and Alain Bonardi, “Héritage et appropriation analytiques de Jean-Claude Risset : un exemple de modélisation en langage Faust des codes Music V de Songes (1979)”, Proceedings from Rencontres internationales du Collegium Musicæ – Jean-Claude Risset Interdisciplinarités, Paris: IRCAM, 2018 (video online: https://medias.ircam.fr/embed/media/x5f02f2).

[Svidzinski, 2018] – João Svidzinski, Modélisation orientée objet-opératoire pour l’analyse et la composition du répertoire musical numérique, Doctoral Thesis, Paris 8 University, 2018 (online: https://hal.archives-ouvertes.fr/tel-02045765).

[Rix, 2012] – Florence Rix, Songes (1979) de Jean-Claude Risset – Analyse de la pièce et étude des techniques DSP, Master Dissertation, Jean Monnet University, Saint-Étienne, June 2012.

[Wessel, 1978] – David Wessel, “Timbre Space as a Musical Control Structure”, IRCAM Activities Report, No. 12, 1978 (online: http://articles.ircam.fr/textes/Wessel78a/).

[Veitl, 2010] – Anne Veitl, Falling notes / La chute des notes, Delatour: Sampzon, 2010.

Archives

[Arch. PRISM – Risset] – Fonds Jean-Claude Risset, Laboratoire PRISM (UMR 7061 – France). Copyright applies to all documents digitized by the PRISM Laboratory.

Audio Recordings

[EA CD Ina/GRM, 1987] – Jean-Claude Risset, Sud; Dialogues; Inharmonique; Mutations, Ina C 1003, 1987.

[EA CD Wergo, 1988] – Jean-Claude Risset, Songes; Passages; Little Boy; Sud, Wergo WER 2013-50, 1988.

[EA CD Wergo, 1995] – Jean-Claude Risset, An Introductory Catalogue of Computer Synthesized Sounds, Wergo WER 2033-2, 1995.

[EA Inédit Ina] – Jean-Claude Risset, Songes (four-track version in AIFF format, 44.1 kHz, 16-bit).

Video Recordings

[EV Risset, 2016] – Jean-Claude Risset, interview before the Concert Multiphonies (Ina-GRM) on 24 January, 2016 (online: https://www.youtube.com/watch?v=-pkTEdxKfRg).

[EV Dars and Papillault, 1999] – Jean-François Dars and Anne Papillault, Jean-Claude Risset, médaille d’Or du CNRS 1999, DVD CNRS (online: https://images.cnrs.fr/video/394).

Acknowledgments and Citations

Many thanks to Jean-Claude Risset in memoriam, for having made his archives on Songes available to us before his death in 2016.

To cite this article:

João Svidzinski and Vincent Tiffon, “Jean-Claude Risset – Songes”, ANALYSES – Œuvres commentées du répertoire de l’Ircam [Online], 2021. URL: https://brahms.ircam.fr/analyses/Songes/
